Kubernetes and Wireguard: CNI vs VM

Introduction

Some time ago I wrote a blog post about creating a mesh network, using as an example a k3s cluster that I built spanning three different data centres. A few days ago, a colleague of mine asked me why I didn’t use the native implementation of WireGuard that k3s supports.

That’s a brilliant question. I have done that multiple times, both with k3s (Flannel) and with other Kubernetes clusters using Calico’s WireGuard support. There is a time and place for each of them, so let’s have a look.

What’s a CNI

In Kubernetes, the Container Network Interface (CNI) is the plugin system that connects pods and nodes on a virtual network. A CNI plugin assigns IP addresses to pods and configures the routes so pod-to-pod and pod-to-service traffic can flow across the cluster. Different CNIs (like Flannel, Calico, or Cilium) implement this differently and may add features such as encryption, network policy, or eBPF-based routing.

I won't compare CNIs here. There are too many implementations.

For most use cases, the choice makes little difference; however, for large clusters or when specific features are required, the selection matters. Cloud providers such as Google and AWS also provide vendor-specific CNIs.

The matter we’re discussing today is encryption with Wireguard.

What’s the difference then?

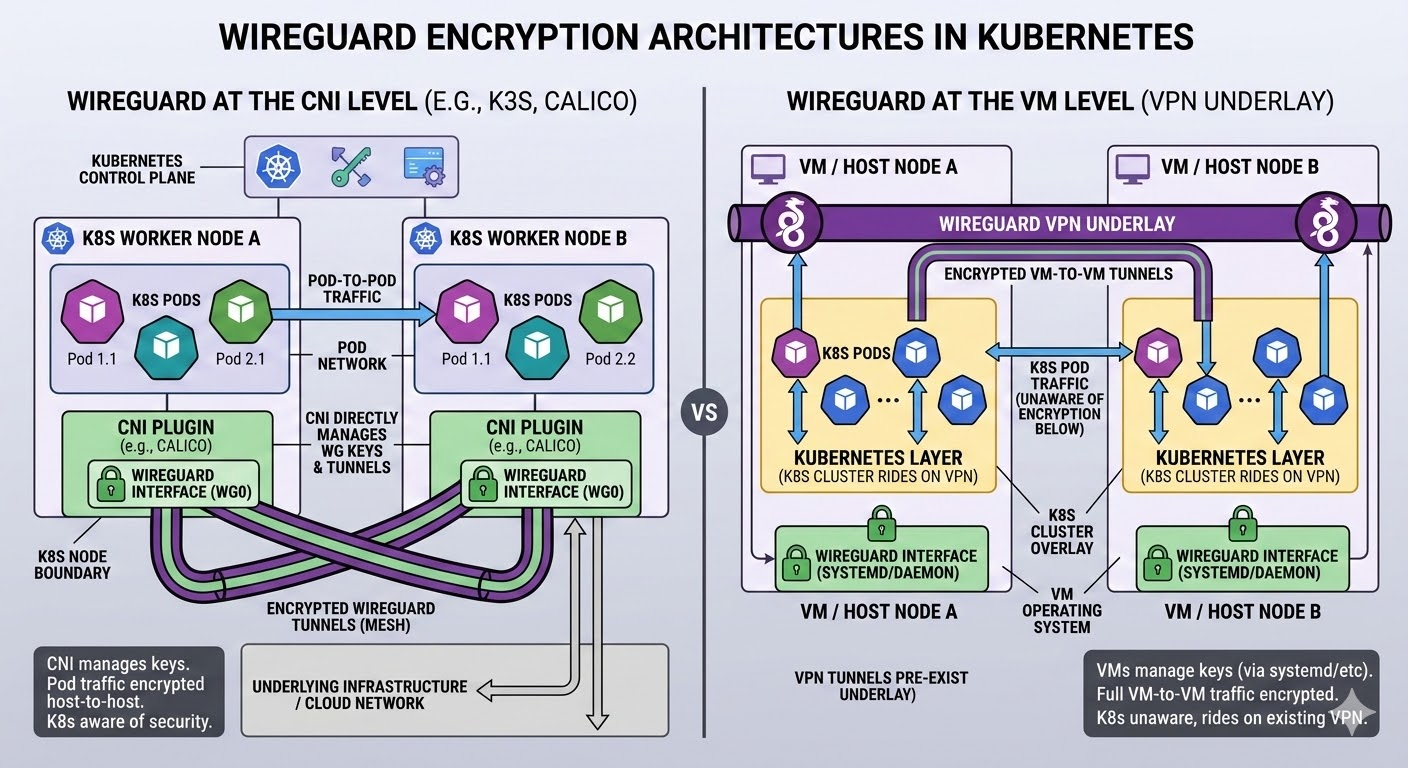

The main difference is what traffic you encrypt and who manages the tunnels: wireguard-native (Flannel, Calico, etc.) encrypts the Kubernetes pod network at the CNI layer, whereas pre-building WireGuard on the VMs gives you a generic VPN underlay that k8s just rides on.

Most Kubernetes CNIs that integrate WireGuard (Cilium, Calico, etc.) are designed to encrypt inter-node pod traffic, i.e. pod↔pod traffic that leaves the node and traverses the underlying network.

- Cilium’s WireGuard mode creates a tunnel between nodes and “encrypts all traffic that flows to other worker nodes” from pods.

- Calico’s WireGuard support similarly forms an encrypted mesh between nodes for workload traffic.

- Flannel's implementation is simpler and more limited; it is primarily used as a backend for overlay networks, as in K3s.

In most common setups, the CNI’s WireGuard integration does not automatically encrypt traffic between worker nodes and the Kubernetes control plane (API server, etcd, cloud control plane endpoints). The control-plane traffic is normally protected via TLS (Kubernetes API server TLS, etcd TLS, cloud LB TLS), not by the CNI’s WireGuard.
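A rough way to see which side of that line a destination falls on is to test it against the pod CIDR. The sketch below is a simplification: it assumes the k3s default cluster CIDR of 10.42.0.0/16, and the helper names (`ip_to_int`, `in_cidr`, `describe_path`) are made up for illustration. 10.13.1.1 is the API server address used in the lab later in this post.

```shell
#!/bin/sh
# Convert a dotted-quad IPv4 address to a 32-bit integer.
ip_to_int() {
    old_ifs=$IFS
    IFS=.
    set -- $1          # split the address into its four octets
    IFS=$old_ifs
    echo $(( ($1 << 24) + ($2 << 16) + ($3 << 8) + $4 ))
}

# Return 0 when address $1 falls inside CIDR $2 (e.g. 10.42.0.0/16).
in_cidr() {
    addr=$(ip_to_int "$1")
    net=$(ip_to_int "${2%/*}")
    bits=${2#*/}
    # Build the netmask; a /0 prefix matches everything.
    if [ "$bits" -eq 0 ]; then
        mask=0
    else
        mask=$(( (0xFFFFFFFF << (32 - bits)) & 0xFFFFFFFF ))
    fi
    [ $(( addr & mask )) -eq $(( net & mask )) ]
}

# Classify a destination relative to the pod network.
# $2 is the pod CIDR; 10.42.0.0/16 is assumed when it is omitted.
describe_path() {
    if in_cidr "$1" "${2:-10.42.0.0/16}"; then
        echo "$1: pod network, encrypted by the CNI when it crosses nodes"
    else
        echo "$1: outside the pod network, relies on TLS or an underlay VPN"
    fi
}

describe_path 10.42.1.15   # a pod address
describe_path 10.13.1.1    # the API server address from the lab
```

On a real node, `ip route get <address>` tells you which interface the kernel would actually pick, which is the authoritative answer.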

Practical differences

- Simplicity vs flexibility: wireguard-native is simpler and more integrated if you only care about securing intra-cluster traffic; a pre-built WireGuard network is more flexible if you also need encrypted access for non-k8s services or multi-purpose overlay networks.

- Failure domains: with wireguard-native, if k8s or the CNI is unhealthy the VPN used for pod traffic is also affected; with an external WireGuard underlay, the VPN can stay up even if k8s is having a bad day.

- Observability and debugging: external WireGuard is standard wg/wg-quick tooling, logs and interfaces that you own; wireguard-native creates its own CNI-managed interface (for example flannel-wg) that you debug via both flannel and WireGuard tooling.
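Whichever interface you end up debugging, the WireGuard side of it looks the same. As a minimal sketch, the function below reads the tab-separated output of `wg show <interface> dump` and lists peers whose last handshake looks old. The function name, the 180-second threshold and the sample keys and addresses are assumptions for illustration, and a peer that is merely idle can also show up as stale.

```shell
#!/bin/sh
# Read `wg show <interface> dump` output on stdin and print peers whose last
# handshake is older than the allowed age, or that never completed one.
# $1 = current Unix time, $2 = maximum age in seconds (assumed default: 180).
stale_peers() {
    awk -F '\t' -v now="$1" -v max_age="${2:-180}" '
        NR == 1 { next }    # the first line describes the interface itself
        {
            # Peer columns: public key, preshared key, endpoint, allowed IPs,
            # latest handshake (epoch seconds), rx bytes, tx bytes, keepalive.
            handshake = $5
            if (handshake == 0) {
                printf "%s %s never\n", $1, $3
            } else if (now - handshake > max_age) {
                printf "%s %s %ds\n", $1, $3, now - handshake
            }
        }'
}

# Made-up dump output so the sketch runs without WireGuard installed.
sample_dump() {
    printf 'PRIVATE_KEY\tPUBLIC_KEY\t51820\toff\n'
    printf 'PEER1_KEY\t(none)\t10.13.1.2:51820\t10.42.1.0/24\t1700000000\t4972\t1592\toff\n'
    printf 'PEER2_KEY\t(none)\t10.13.1.3:51820\t10.42.2.0/24\t0\t0\t0\toff\n'
}

sample_dump | stale_peers 1700000060
```

On a node you would feed it live data instead, for example `wg show flannel-wg dump | stale_peers "$(date +%s)"`, or the same with `wg0` for a VM-level tunnel.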

What should you use?

This is a multifaceted question. I would go as far as saying you should always use one of them. Which one depends on your level of risk, where your servers are located, the data you’re transmitting, whether you are using a service mesh (Linkerd, Istio) that already encrypts the traffic, whether you carry non-k8s traffic, and so on.

If you asked me about your individual needs, I could not answer without a full understanding of your platform, but I can give you a few scenarios to consider.

Complexity

Perhaps the biggest drawback of VM-level WireGuard encryption is the complexity. You become responsible for managing the WireGuard keys, routing, etc. If you use a native implementation at the CNI level, this is taken care of for you.
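To give a feel for what "managing keys and routing" means in practice, here is a minimal sketch that renders a wg-quick style config for one node of a three-node mesh. Everything specific in it is an assumption for illustration: the function names, the key placeholders, the 203.0.113.x endpoints, the 10.100.0.x tunnel addresses and the key file path. You would need one such file per node, kept in sync whenever a node is added or a key is rotated.

```shell
#!/bin/sh
# Print a wg-quick style config for one node.
# $1 = this node's tunnel address (CIDR), $2 = path to its private key file.
# Peers are read from stdin, one per line: "<public-key> <endpoint> <allowed-ips>".
render_wg_conf() {
    printf '[Interface]\n'
    printf 'Address = %s\n' "$1"
    printf 'ListenPort = 51820\n'
    # Load the key when the interface comes up instead of embedding it here.
    printf 'PostUp = wg set %%i private-key %s\n' "$2"
    while read -r pubkey endpoint allowed_ips; do
        [ -n "$pubkey" ] || continue    # skip blank lines
        printf '\n[Peer]\n'
        printf 'PublicKey = %s\n' "$pubkey"
        printf 'Endpoint = %s\n' "$endpoint"
        printf 'AllowedIPs = %s\n' "$allowed_ips"
        printf 'PersistentKeepalive = 25\n'
    done
}

# Hypothetical peer list for a three-node mesh, as seen from node 1.
peers() {
    cat <<'EOF'
PEER2_PUBLIC_KEY 203.0.113.2:51820 10.100.0.2/32
PEER3_PUBLIC_KEY 203.0.113.3:51820 10.100.0.3/32
EOF
}

peers | render_wg_conf 10.100.0.1/24 /etc/wireguard/private.key
```

The output would typically be saved as something like `/etc/wireguard/wg0.conf` and brought up with `wg-quick up wg0`.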

Don't let the perfect be the enemy of the good

Public IPs

Do your nodes use public IPs? If the answer is yes, I would most certainly recommend VM-level WireGuard, because you will be sending important traffic over the internet. Yes, a lot of it will be encrypted with TLS, but can you risk it?

Multi Cloud

The same answer as before, really. If you’re doing a multi-cloud mesh, you’re likely sending data over the public internet.

Private Network

If you are entirely in a dedicated private network and your internet access is controlled by NAT and an egress firewall, you can go with the native implementation. It is simpler to implement and most of your security needs will be covered.
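The three scenarios above can be condensed into a rough rule of thumb. The function below is only a sketch of that reasoning with a made-up name and made-up inputs; it is no substitute for looking at your actual threat model.

```shell
#!/bin/sh
# Suggest where to run WireGuard, following the scenarios above.
# Each argument is "yes" or "no":
#   $1 = nodes use public IPs
#   $2 = cluster spans multiple clouds
#   $3 = non-Kubernetes traffic also needs encrypting
suggest_wireguard_layer() {
    if [ "$1" = yes ] || [ "$2" = yes ] || [ "$3" = yes ]; then
        echo "vm-level"      # traffic leaves the private network or is not pod traffic
    else
        echo "cni-native"    # private network, pod traffic only
    fi
}

suggest_wireguard_layer yes no no
suggest_wireguard_layer no no no
```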

Lab: k3s with wireguard-native

Before I go, let me show you how easy it is to set up Wireguard with k3s as a reminder that at the very least you should be looking at CNI level encryption.
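A side note before the steps: the server options passed on the command line in this lab can also live in the k3s config file, which k3s reads from `/etc/rancher/k3s/config.yaml` by default. The sketch below writes an equivalent file. The function name is hypothetical, and `enp7s0` is simply the NIC used in this lab, so yours will likely differ.

```shell
#!/bin/sh
# Write a k3s server config equivalent to the install flags used in this lab.
# $1 = target directory (default: /etc/rancher/k3s)
# $2 = NIC flannel should bind to (default: enp7s0, as in this lab)
write_k3s_server_config() {
    dir=${1:-/etc/rancher/k3s}
    iface=${2:-enp7s0}
    mkdir -p "$dir"
    cat > "$dir/config.yaml" <<EOF
write-kubeconfig-mode: "644"
flannel-iface: "$iface"
flannel-backend: "wireguard-native"
EOF
}

# Demonstrate against a scratch directory rather than the real location.
demo_dir=$(mktemp -d)
write_k3s_server_config "$demo_dir" enp7s0
cat "$demo_dir/config.yaml"
```

With the file in place before installation, the installer can be run without `INSTALL_K3S_EXEC` for these options.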

- Install wireguard on all the nodes

      apt update && apt -y install wireguard

- Initialise the control plane node

      curl -sfL https://get.k3s.io | \
        K3S_KUBECONFIG_MODE="644" INSTALL_K3S_EXEC="--flannel-iface=enp7s0 \
        --flannel-backend=wireguard-native" sh -

- Add the worker nodes

      curl -sfL https://get.k3s.io | \
        K3S_TOKEN="xxxxxxx::server:b05e3f66d60321adc11dffee52a289b8" \
        K3S_URL=https://10.13.1.1:6443 \
        K3S_KUBECONFIG_MODE="644" INSTALL_K3S_EXEC="--flannel-iface=enp7s0" sh -

Once your cluster is up and running, the only thing you’ll notice different is the new NIC for the encryption:

    ~# ifconfig flannel-wg
    flannel-wg: flags=209<UP,POINTOPOINT,RUNNING,NOARP> mtu 1370
            inet 10.42.0.0 netmask 255.255.255.255 destination 10.42.0.0
            unspec 00-00-00-00-00-00-00-00-00-00-00-00-00-00-00-00 txqueuelen 0 (UNSPEC)
            RX packets 101 bytes 4972 (4.9 KB)
            RX errors 0 dropped 0 overruns 0 frame 0
            TX packets 18 bytes 1592 (1.5 KB)
            TX errors 0 dropped 0 overruns 0 carrier 0 collisions 0

Closing thoughts

Security is a design choice, not a bolt-on. Whether you encrypt at the CNI layer or run WireGuard at the VM layer, you are making a security decision first and a networking decision second. Treat all data in transit as sensitive by default, prefer authenticated and encrypted channels, and revisit your threat model, compliance obligations, and operational trade-offs regularly.

Encryption should never be optional; it is part of the platform’s contract from cluster bootstrapping to day-2 operations. At digitalis.io we support multiple organisations in the financial sector, and perhaps because of that we have grown accustomed to designing every platform with security first in mind.

I, for one, welcome our new robot overlords